PsyOps and Socialbots

Introduction

Social media are the principal aggregation "places" in cyberspace; billions of connected people use them for a wide variety of purposes, from gaming to socialization.

The high penetration level of social media makes these platforms privileged targets for cybercriminal activities and intelligence analysis. In many cases, the two aspects converge and pursue the same objectives.

As described in my previous article "Social Media Use in the Military Sector," social media are actively used by state-sponsored actors and governments to support military operations such as:

- Psychological operations (PsyOps)

- Open-source intelligence (OSINT)

- Cyber espionage

- Offensive purposes

This post is focused on the use of social media platforms to conduct psychological operations or, rather, to manipulate the opponent's perception.

Psychological Operations (PsyOps)

Military doctrine includes the possibility of exploiting the wide audiences of social media to conduct psychological operations (PsyOps) in a context of information warfare with the primary intent to influence the "sentiment" of large masses (e.g., emotions, motives, objective reasoning).

PsyOps is defined by the U.S. military as "planned operations to convey selected truthful information and indicators to foreign audiences to influence their emotions, motives, objective reasoning, and ultimately, the behavior of their governments, organizations, groups, and individuals."

"Psychological operations" is not a new concept. On many occasions and in different periods, the military has used the diffusion of information to interfere with opponents, spreading fabricated information or propaganda messages. Governments have infiltrated secret agents behind enemy lines or dropped leaflets from aircraft over enemy territory; today, social networks offer clear advantages over these types of missions.

The terms "psychological operations" and "psychological warfare" are often used interchangeably; "psychological warfare" was first used in 1920 and "psychological operations" in 1945. Distinguished theorists like Sun Tzu have highlighted the importance of waging psychological warfare:

"One need not destroy one's enemy. One only needs to destroy his willingness to engage…"

"For to win one hundred victories in one hundred battles is not the acme of skill. To subdue the enemy without fighting is the supreme excellence." – Sun Tzu

Governments consider PsyOps a crucial option for diplomatic, military, and economic activities. New-generation media and large-diffusion platforms, such as mobile and social media, give governments a powerful instrument to reach critical masses instantly.

PsyOps consist of conveying messages to selected groups, known as target audiences, to promote particular themes that result in desired attitudes and behaviors that support the achievement of political and military objectives. NATO promoted a doctrine for psychological operations that defines a target audience as "an individual or group selected for influence or attack by means of psychological operations."

In the document "Allied Joint Doctrine for Psychological Operations AJP-3.10.1(A)," NATO highlighted the possibility of supporting military operations with PsyOps that pursue three basic objectives:

- Weaken the will of the adversary or potential adversary target audiences.

- Reinforce the commitment of friendly target audiences.

- Gain the support and cooperation of uncommitted or undecided audiences.

Obviously, psychological operations must be placed in the context of our time. Today they can benefit from the advanced technology at our disposal: the Internet, virtual reality, blogs, video games, chat bots, and, of course, social network platforms could all be used by the military for various purposes.

The military sector is investing heavily in PsyOps professionals and is exploring these technologies to influence individuals to support its cause, or to sway the sentiment of an entire population.

To influence common sentiment on specific topics, intelligence agencies arrange political and geopolitical campaigns that use impressive amounts of data to expose the masses to specifically crafted information, whether true or fabricated. Intelligence agencies use social media networks as a vector for the information flow: dedicated content is created to draw attention to topics of interest, and discussions are carried on to involve an increasing number of users. The dynamism of these discussions is the primary element of innovation in modern PsyOps: they are structured with ad hoc comments and posts and are used to sensitize users and influence their perception of events.

The principal advantages of using social media for PsyOps are:

- Social media can reach a wide audience instantly, and speed is an essential factor in PsyOps.

- Social media can reach individuals who are difficult to reach in other ways, thanks to the high penetration of Internet technology.

- The information presented can be easily modified in the cyber domain to suit the target audience.

- Flexible and persuasive technologies are interactive and allow an attacker to tailor operations to highly dynamic situations.

- Cyber and persuasive technologies can grant anonymity.

- Automated PsyOps on social media are more persistent and efficient than human operators.

- Social media are a cheap means of dissemination.

As specified in NATO doctrine, there are also various disadvantages in conducting PsyOps as a military option:

- It is impossible to limit the availability of published information to selected audiences, unless it is sent directly to the target audience, for example by e-mail. This requires additional effort to minimize the negative impact of operations on unintended audiences.

- The target audience has to be able to access the Internet.

- PsyOps messages have to appeal to target audiences much more strongly than in most other media, which requires considerable effort from the attackers.

Automated PsyOps

One of the principal factors in the success of a PsyOps operation is the ability to automate information diffusion and the management of sources. To do so, entities that conduct psychological operations start from the assumption that the Internet, and social networks in particular, lack proper management of users' digital identities; this makes it possible to adopt various techniques to pursue the goals above.

The U.S. military is considered one of the most active entities in developing automated software that can manipulate social media sites by using fake identities to influence Internet conversations and discussions and to spread pro-American propaganda. The Guardian reported that a Californian corporation, Ntrepid, was awarded a $2.76m contract with United States Central Command (Centcom), which oversees U.S. armed operations in the Middle East and Central Asia, to design an "online persona management service" that would allow one U.S. serviceman to control up to 10 separate identities based all over the world.

"0001- Online Persona Management Service. 50 User Licenses, 10 Personas per user."

"Software will allow 10 personas per user, replete with background, history, supporting details, and cyber presences that are technically, culturally, and geographically consistent. Individual applications will enable an operator to exercise a number of different online personas from the same workstation and without fear of being discovered by sophisticated adversaries. Personas must be able to appear to originate in nearly any part of the world and can interact through conventional online services and social media platforms. The service includes a user-friendly application environment to maximize the user's situational awareness by displaying real-time local information."

The Centcom contract stipulates that each fake online persona must have a convincing background, history, and supporting details, and that up to 50 U.S.-based controllers should be able to operate false identities from their workstations "without fear of being discovered by sophisticated adversaries."

The intent of the U.S. military was to influence global sentiment by creating a false consensus in online discussions. A Centcom spokesman, Commander Bill Speaks, clarified that the software would not address U.S. audiences and that the system could operate in several languages, including Arabic, Farsi, Urdu, and Pashto.

"The technology supports classified blogging activities on foreign-language websites to enable Centcom to counter violent extremist and enemy propaganda outside the U.S."

Centcom stated that it was not targeting U.S.-based websites, in English or any other language, and declared that it was not targeting Facebook or Twitter; however, recent revelations about PRISM and other surveillance programs raise serious doubts about these official declarations.

"Once developed, the software could allow U.S. service personnel, working around the clock in one location, to respond to emerging online conversations with any number of coordinated messages, blog posts, chatroom posts, and other interventions. Details of the contract suggest this location would be MacDill air force base near Tampa, Florida, home of U.S. Special Operations Command," posted The Guardian.

The contract appears to be part of a program dubbed Operation Earnest Voice (OEV), which was first developed in Iraq by the U.S. military to conduct psychological warfare operations against al-Qaida's online supporters. The program is reported to have grown into a $200m program and, according to intelligence experts, it has been used against jihadists across Pakistan, Afghanistan, and the Middle East.

Gen Mattis said: "OEV seeks to disrupt recruitment and training of suicide bombers; deny safe havens for our adversaries; and counter extremist ideology and propaganda." He added that Centcom was working with "our coalition partners" to develop new techniques and tactics the U.S. could use "to counter the adversary in the cyber domain."

On February 5, 2011, Anonymous compromised the HBGary website, disclosing tens of thousands of documents from both HBGary Federal and HBGary, Inc., including company emails. The hack revealed that Bank of America had approached the Department of Justice over concerns about information that WikiLeaks held about it. According to an article published by The New York Times, the Department of Justice in turn referred Bank of America to the law firm Hunton and Williams, which connected the financial institution with a group of information security firms collectively known as Team Themis, which included HBGary, Palantir Technologies, Berico Technologies, and Endgame Systems.

Their mission was to undermine the credibility of WikiLeaks and of the journalist Glenn Greenwald. The hack also revealed that Team Themis was developing a "persona management" system for the United States Air Force that allowed one user to control multiple online identities ("sock puppets") for commenting in social media spaces, a concept similar to the system described above.

Socialbots and Persona Management Software

Socialbots are considered the most efficient way to control social media automatically and to influence common sentiment about specific topics. Scientists believe that socialbots are already expanding their presence in social media. During 2012, the number of Twitter accounts topped 500 million, but according to some researchers only about 35% of the average Twitter user's followers are real people. More than 50% of Internet traffic is generated by nonhuman sources, mainly bots and M2M systems.

During the 2011 Russian parliamentary election, thousands of Twitter bots operated for several months, posting specifically crafted content. Every day the principal social networks were flooded with messages targeting political opponents and anti-Kremlin activists in order to drown them out. Researchers say that similar tactics have been used more recently by the government in Syria. The same tactic was adopted by officials of Mexico's governing Institutional Revolutionary Party, who used bots to sabotage the party's critics.

Botnets are a primary choice for the attackers, who could use these malicious architectures for the following purposes:

- By hijacking the identity of targeted individuals: hackers could infect victims' systems, using spear phishing attacks to gain complete control of them. State-sponsored hackers could use zero-day exploits to hide their campaign; once they have gained control of the victims' systems, they could impersonate the victims for various purposes, from intelligence gathering to social engineering. Operations of this kind are high risk and therefore uncommon in ordinary PsyOps; in fact, an attack on a specific legitimate account that is used to spread specific information is easy to detect.

- More feasible is the use of botnets for identity spoofing. Attackers could create a network of fake profiles that do not correspond to any existing individual in order to condition opinion on a large scale. The botnet could be designed to simulate dialogue among these fake individuals on specific topics within popular forums and social networks; in this way it is possible to raise the level of attention on various issues.

Social media websites attract the interest of ordinary people and also of malware creators, who design malicious code, dubbed socialbots, that has grown along with social networking platforms. Social botnets are malicious architectures composed of a considerable number of fake profiles (e.g., millions of them) entirely managed by machines. The bot agents are capable of interacting realistically with each other and with real users; their operation changes the "sentiment" on specific topics by conducting "conversations" on a large scale, alters the overall social graph, and prevents meaningful correlations from being drawn from the data. Social botnets could also be used for other purposes, such as social graph fuzzing: intentionally associating a profile with groups and people unrelated to its real interests and relationships in order to introduce "noise" into its social graph. Socialbots are also very attractive to cybercriminals and state-sponsored hackers, because they represent a privileged channel for spreading malware, stealing passwords, and posting malicious links through social media networks.

Security researchers are convinced that socialbots could be used to sway elections, attack governments, or influence the stock market, and some recent events have probably already been influenced by such malicious architectures.

Recently, computer scientists from the Federal University of Ouro Preto in Brazil announced that Carina Santos, a popular journalist on Twitter, was not a real person but a socialbot they had created. Surprisingly, based on the circulation of her tweets, a commonly used ranking site, Twitalyzer, ranked this account as having more online "influence" than Oprah Winfrey's profile.

Early in 2013, Microsoft detected a malware called Trojan:AutoIt/Kilim.A, a Trojan specifically designed to target the Google Chrome browser.

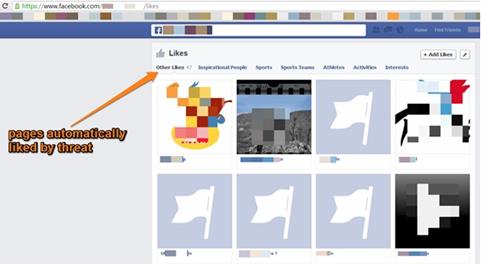

"The Trojan may be installed when an unsuspecting user clicks on a shortened hyperlink that redirects to a malicious website. The website masquerades as a download site for legitimate software, and tricks the user into downloading and executing Kilim. Upon successful execution, Kilim disables user account controls (UAC) and adds an auto-start entry in the system registry to survive the reboot. It then proceeds to download two malicious Chrome browser extensions," Microsoft revealed.

The installed extensions are able to access the victim's social networking accounts and perform actions in place of the legitimate user. Similar behavior could clearly be exploited to build a network of agents that promote specific topics in an automated way, using existing and legitimate user accounts.

Figure 1 - Trojan:AutoIt/Kilim.A operation

A Zeus Trojan variant has also recently been used to target social media. Using this popular malware, attackers could boost the ranking of specific posts and spread a series of links to which the bots add thousands of likes. These variants are able to post content, such as photos and comments, automatically and to increase the number of followers, influencing the attacked social media on specific topics. Using the Zeus Trojan, hackers could carry out the essential steps of a psychological operation by creating fake profiles, each with a specific role in the fake network: some nodes post content simulating a discussion, while thousands of others generate large numbers of likes and follows for the first group of profiles, fueling interest in a particular discussion through comments and images.
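The two-tier structure described above (a few "poster" profiles plus many "amplifier" profiles that only like or follow them) leaves a recognizable footprint. The following Python sketch is a hypothetical defensive heuristic, not a method from the sources cited in this article: it assumes engagement events are available as (actor, target) pairs, flags actors that have enough activity and direct nearly all of it at a handful of targets, and then measures how much of each target's engagement comes from those actors. The function names and thresholds (`min_events`, `threshold`, `top_k`) are illustrative assumptions.

```python
from collections import Counter, defaultdict


def engagement_concentration(events, top_k=3):
    """Share of each actor's engagements (likes, follows) that go to its top_k targets."""
    per_actor = defaultdict(Counter)
    for actor, target in events:
        per_actor[actor][target] += 1
    scores = {}
    for actor, counts in per_actor.items():
        total = sum(counts.values())
        top = sum(n for _, n in counts.most_common(top_k))
        scores[actor] = top / total
    return scores


def suspected_amplifiers(events, min_events=20, threshold=0.9, top_k=3):
    """Actors with enough activity whose engagements almost all hit a few targets."""
    totals = Counter(actor for actor, _ in events)
    scores = engagement_concentration(events, top_k)
    return {actor for actor, score in scores.items()
            if totals[actor] >= min_events and score >= threshold}


def amplified_share(events, amplifiers):
    """For each target, the fraction of its received engagements that came from amplifiers."""
    received = Counter(target for _, target in events)
    from_amplifiers = Counter(target for actor, target in events if actor in amplifiers)
    return {target: from_amplifiers[target] / n for target, n in received.items()}


if __name__ == "__main__":
    # Hypothetical data: five amplifier accounts boosting two poster accounts,
    # plus one ordinary user spreading likes across many accounts.
    events = []
    for i in range(5):
        events += [(f"bot{i}", "poster_a")] * 20 + [(f"bot{i}", "poster_b")] * 5
    events += [("alice", f"user{j}") for j in range(30)] + [("alice", "poster_a")]
    amplifiers = suspected_amplifiers(events)
    print(sorted(amplifiers))
    print(amplified_share(events, amplifiers)["poster_a"])
```

A score like this could only ever be one signal among many: an amplifier network that mixes in random engagement with unrelated accounts would dilute its concentration and slip under a fixed threshold.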

This use of malicious code is also growing in popularity in the cyber ecosystem, because generating fake engagement on social media to increase visibility is becoming a profitable business. On average, 1,000 Instagram followers can be bought for $15, while 1,000 likes go for $30. Social media customers willingly pay thousands of dollars for such services, and it is reasonable to expect that demand will grow as social media continue to rise.

"The current version of Zeus is a modified one which attacks all infected computer running on a network and forces them to like or follow pages. Furthermore, it also calls the users to install spammy apps and plug-ins."

Socialbots vary in complexity: the more sophisticated ones query a constantly updated internal database containing the latest news on current events and on the most important facts about the topic to be addressed. A botnet is the optimal structure for such agents, and the latest generation of P2P and Tor-based botnets is extremely difficult to track. The botmaster could control hundreds of thousands of infected machines that jointly promote a series of discussions on various social media. Almost any social medium could be compromised by psychological operations conducted through these architectures; to the untrained eye, it would appear that a multitude of users are debating issues of various kinds when, in fact, intelligence services are disseminating content designed to influence global sentiment.

Paradoxically, the main challenge in conducting these operations is not controlling thousands of bots around the planet but compromising as many systems as possible, and state-sponsored actors usually exploit zero-day vulnerabilities to do this dirty work.

The cybercrime industry is also interested in the capabilities of socialbots, mainly to sell Twitter followers or to involve victims in fraudulent activities, such as stealing passwords or spreading other malware.

The "Infiltration"—a Socialbot Experiment

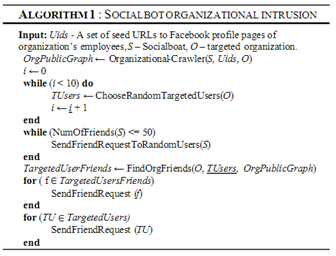

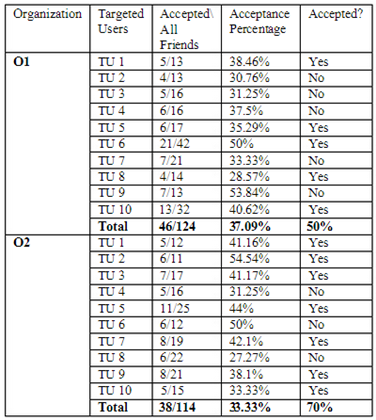

Researchers Aviad Elyashar, Michael Fire, Dima Kagan, and Yuval Elovici published an interesting paper, titled "Homing Socialbots: Intrusion on a specific organization's employee using Socialbots," that presents a method for infiltrating specific users in targeted organizations by using organizational social network topologies and socialbots. To measure the efficiency of the method, the researchers decided to target technology-oriented organizations, whose employees should be more aware of the dangers of exposing private information on social media. The goal of the experiment was to induce social network users to accept a socialbot's friend request; an accepted request is called an infiltration.

Figure 2 - Socialbot organizational intrusion algorithm

The infiltration exposes users' information, including their workplace data. The researchers used socialbots in two phases:

- Information gathering: reconnaissance of Facebook users who work in the targeted organizations.

- Target identification: random selection of 10 Facebook users from each targeted organization. These 10 users were the specific users whom the attackers wanted to infiltrate.

The socialbots were instructed to send friend requests to all of the specific users' friends who work, or had worked, in the same targeted organization, collecting as many mutual friends as possible to increase the probability that the targeted users would accept the bots' friend requests.
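The selection step can be sketched with plain set operations. The Python below is a hypothetical illustration, not the researchers' code: it assumes the friend graph is a dict mapping each user to a set of friends and that employment data is a dict mapping users to the set of organizations they work or worked for. The same `colleague_friends` computation also lets a defender estimate how many colleague links an employee exposes to this kind of approach.

```python
def colleague_friends(friends, employers, user, org):
    """Friends of `user` who list `org` as a current or past employer."""
    return {f for f in friends.get(user, set()) if org in employers.get(f, set())}


def mutual_friends(friends, a, b):
    """Users who are friends with both a and b."""
    return friends.get(a, set()) & friends.get(b, set())


def add_friendship(friends, a, b):
    """Record an accepted friend request as an undirected edge."""
    friends.setdefault(a, set()).add(b)
    friends.setdefault(b, set()).add(a)


if __name__ == "__main__":
    # Hypothetical toy graph: target "t" has two colleagues and one outside friend.
    friends = {"t": {"c1", "c2", "x"}, "c1": {"t"}, "c2": {"t"}, "x": {"t"}}
    employers = {"c1": {"acme"}, "c2": {"acme", "other"}, "x": {"other"}}
    candidates = colleague_friends(friends, employers, "t", "acme")
    # Assume, for illustration only, that every request to a candidate is accepted.
    for c in sorted(candidates):
        add_friendship(friends, "bot", c)
    print(sorted(mutual_friends(friends, "bot", "t")))
```

Each accepted request from a colleague adds one mutual friend between the bot and the target, which is the quantity the method tries to maximize before contacting the target directly.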

The results were surprising: the method succeeded in 50% to 70% of cases. An infiltration could be conducted simply to give the attacker access to personal and valuable information, or to establish a first link to exploit in further psychological operations. Infiltration can therefore be considered a strategic part of conducting PsyOps.

Figure 3 - Targeted users - summary results

PsyOps Mitigation

Mitigating automated psychological operations is a very complex task. Technology has given an impressive boost to this type of operation; instantaneous messaging, anonymity, and a wide audience are the primary keys to their success.

DARPA (Defense Advanced Research Projects Agency) has launched the Social Media in Strategic Communication (SMISC) program.

The general goal of the SMISC program is to develop a new science of social networks built on an emerging technology base. DARPA is working on a new generation of tools to support human operators in countering misinformation or deception campaigns with truthful information. The program aims to detect any attempt by external agents to shape global sentiment or opinion through information generated and spread on social media. To this end, security and media researchers are joining efforts to recognize specific patterns that could reveal the operation of automated botnets.

"If successful, they should be able to model emergent communities and analyze narratives and their participants, as well as characterize generation of automated content, such as by bots, in social media and crowd sourcing."

To detect ongoing PsyOps, it is necessary to analyze large amounts of data from social media networks. Security researchers could work on two distinct fronts: detecting flows of information aimed at influencing global sentiment on a specific set of topics, and discovering evidence of automated software (e.g., bot agents) working to poison conversations on the principal social media. Both paths are very hard to follow: they require significant computational capacity and adaptive models able to detect the various phases of a psychological operation, such as the "infiltration."
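As a simple example of the first front, coordinated campaigns often push the same message from many accounts within a short period. The Python sketch below is a hypothetical heuristic, not the SMISC approach: it assumes posts are available as (account, timestamp in seconds, text) tuples, normalizes the text (lowercase, URLs and punctuation removed), and flags any message posted by at least `min_accounts` distinct accounts within a sliding window of `window` seconds. Both thresholds are illustrative assumptions.

```python
import re
from collections import defaultdict


def normalize(text):
    """Lowercase, strip URLs and punctuation, collapse whitespace."""
    text = re.sub(r"https?://\S+", "", text.lower())
    text = re.sub(r"[^\w\s]", "", text)
    return " ".join(text.split())


def coordinated_messages(posts, min_accounts=5, window=600):
    """Flag messages posted by at least `min_accounts` distinct accounts
    within `window` seconds of each other.

    posts: iterable of (account, timestamp_seconds, text) tuples.
    Returns {normalized_text: sorted accounts in the first qualifying window}.
    """
    by_text = defaultdict(list)
    for account, ts, text in posts:
        key = normalize(text)
        if key:  # skip posts that were only a link or punctuation
            by_text[key].append((ts, account))

    flagged = {}
    for key, items in by_text.items():
        items.sort()
        start = 0
        for end in range(len(items)):
            # Slide the window start forward until it spans at most `window` seconds.
            while items[end][0] - items[start][0] > window:
                start += 1
            accounts = {account for _, account in items[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged[key] = sorted(accounts)
                break
    return flagged
```

Exact matching after normalization is deliberately simple; campaigns that paraphrase their messages would call for near-duplicate techniques such as shingling, at a higher computational cost.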

One of the most valuable contributions to defensive models probably comes from the offensive use of social media: the same cyber units responsible for detecting PsyOps run by foreign actors are also in charge of designing a new generation of software able to evade current automated detection systems.
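A common signal on the second front is posting regularity: a naively scheduled bot posts at almost constant intervals, while human activity tends to be bursty. The Python sketch below is a hypothetical heuristic with illustrative thresholds (`max_cv`, `min_posts`): it computes the coefficient of variation (standard deviation divided by mean) of the gaps between an account's posts. It also illustrates the arms race described above, since a bot that adds random jitter to its schedule would evade this single check, so it can only be one feature in a broader detection model.

```python
import statistics


def interval_cv(timestamps):
    """Coefficient of variation (stdev / mean) of the gaps between consecutive posts.

    Returns None when there are fewer than three posts (fewer than two gaps).
    """
    ts = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    if len(gaps) < 2:
        return None
    mean = statistics.mean(gaps)
    if mean == 0:
        return 0.0  # all posts at the same instant: maximally regular
    return statistics.pstdev(gaps) / mean


def looks_scheduled(timestamps, max_cv=0.1, min_posts=10):
    """Heuristic flag: enough posts, and gaps that are nearly constant."""
    if len(timestamps) < min_posts:
        return False
    cv = interval_cv(timestamps)
    return cv is not None and cv <= max_cv


if __name__ == "__main__":
    # Hypothetical timestamps (seconds): a fixed 5-minute schedule vs. bursty activity.
    print(looks_scheduled([300 * i for i in range(20)]))
    print(looks_scheduled([0, 5, 7, 400, 410, 3000, 3002, 3050, 9000, 9001, 20000]))
```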

Conclusions

Psychological operations have always been considered a crucial option for the military. The wide diffusion of social media gives governments a powerful new platform for spreading news and propaganda in a context of information warfare.

An aggressor may exploit social media to destabilize a company or any other part of modern society. In April 2013, by hijacking the Associated Press Twitter account and spreading fake news of an attack on the White House, the hacktivist group Syrian Electronic Army caused a brief but sharp drop on the U.S. stock market. Someone could clearly have profited from such an event.

The principal change introduced by automated PsyOps is that potentially every actor could control information diffusion on media: cybercriminals, private intelligence contractors, terrorists, and hacktivists could influence global sentiment by fabricating documents and disseminating information backed by convincing and persuasive data. This aspect is crucial and represents a serious threat to the contemporary information society.

It is easy to forecast an increase in social-media-based PsyOps in the coming year. The information war began many years ago, and governments must address this threat with a proper cyber strategy to avoid serious risks and crises.

PsyOps need not be conducted by nation states; they can be undertaken by anyone with the capabilities and the incentive to conduct them and, in the case of private intelligence contractors, there are both incentives (billions of dollars in contracts) and capabilities.

References

https://resources.infosecinstitute.com/social-media-use-in-the-military-sector/

http://www.psywar.org/terminology.php

http://publicintelligence.net/nato-psyops/

http://wiki.echelon2.org/wiki/Operation_Earnest_Voice

http://www.darpa.mil/Our_Work/I2O/Programs/Social_Media_in_Strategic_Communication_%28SMISC%29.aspx

http://www.theguardian.com/technology/2011/mar/17/us-spy-operation-social-networks

http://blogs.technet.com/b/mmpc/archive/2013/06/11/rise-of-the-social-bots.aspx

http://www.nytimes.com/2013/08/11/sunday-review/i-flirt-and-tweet-follow-me-at-socialbot.html

http://hackread.com/zeus-trojan-targets-facebook-instagram-twitter-youtube-linkedin/

http://opinionator.blogs.nytimes.com/2013/06/14/the-real-war-on-reality/?_r=1

http://news.thewindowsclub.com/social-bots-are-on-the-rise-and-marching-says-microsoft-63286/

http://publicintelligence.net/nato-psyops-policy/

http://it.wikipedia.org/wiki/Social_Network_Poisoning

http://www.markusstrohmaier.info/documents/2013_WebSci2013_Socialbots_Impact.pdf