HTTP/2: Enforcing Strong Encryption as the De Facto Standard

The HTTP protocol, which serves as the communication channel for requests and responses on the Web, has received its first major revision since the introduction of HTTP/1.1 in 1999. The new standard brings faster web page rendering and better encryption standards. In this article, we discuss the protocol's concepts, specifications, and terminology, and how HTTP/2 improves web security.

Mark Nottingham, chairman of the IETF working group behind the standard, said, "Many people believe that the only safe way to deploy the new protocol on the 'open' Internet is to use encryption; Firefox and Chrome have said that they'll only support HTTP/2 using TLS."

Introduction

In a world where optimizing site response time produces real financial impact, the speed at which a website loads is a key business metric that can't be ignored. HTTP/2 is transparently upgradable: if your browser and the server it is connected to both support it, the connection is upgraded to HTTP/2. In addition, your browser will remember the server's support for HTTP/2 for next time.

HTTP/2 is not a ground-up rewrite of the HTTP protocol, since the current protocol works well and is used by millions of devices. Introducing a completely new protocol would be very complex and nearly impossible to deploy. The basic principles of HTTP semantics, such as methods (verbs), headers, and cookies, remain the same. What changes is the way HTTP requests and responses are sent over the wire. HTTP/2 is therefore an upgrade rather than a rewrite. The protocol has already been deployed by several providers, including Akamai, Google, and Twitter's main sites. It brings improvements such as:

- Faster webpage rendering

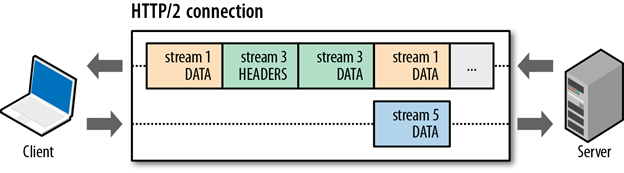

- Implements multiplexing: Multiple requests can be made at once and resources can be delivered whenever they are ready, not necessarily in a particular order or even only after they've been requested. Multiplexing is achieved by the introduction of a new binary framing layer in HTTP/2.

- HTTP/2 is binary, instead of textual: Binary protocols are more efficient to parse, more compact "on the wire", and, most importantly, much less error-prone than textual protocols like HTTP/1.x, because textual protocols often have a number of affordances to "help" with things like whitespace handling, capitalization, line endings, and blank lines. This also means that the protocol is not usable with telnet, but plugins are already available for packet analyzers like Wireshark.

- Header field compression to reduce overhead

- Improves web security: HTTP/2 defines the profile of TLS that is required, which includes the minimum version (TLS 1.2), a cipher suite blacklist, and the extensions used.

Disadvantages in HTTP 1.1 protocol:

HTTP was not designed with latency in mind. The SPDY whitepaper highlights the following as the major drawbacks of the HTTP protocol that inhibit optimal performance:

- Single request per connection: HTTP can only fetch one resource at a time (HTTP pipelining helps, but still enforces only a FIFO queue); a server delay of 500ms prevents reuse of the TCP channel for additional requests. Browsers work around this problem by using multiple connections. Since 2008, most browsers have finally moved from two connections per domain to six.

- Exclusively client-initiated requests: In HTTP, only the client can initiate a request. Even if the server knows the client needs a resource, it has no mechanism to inform the client and must instead wait to receive a request for the resource from the client.

- Uncompressed request and response headers: Request headers today vary in size from ~200 bytes to over 2KB. As applications use more cookies and user agents expand features, typical header sizes of 700-800 bytes are common. For modems or ADSL connections, in which the uplink bandwidth is low, this latency can be significant. Reducing the data in headers could directly improve the serialization latency to send requests.

- Redundant headers: In addition, several headers are repeatedly sent across requests on the same channel, even though headers such as User-Agent, Host, and Accept* are generally static and do not need to be resent.

- Optional data compression: HTTP uses optional compression encodings for data. Content should always be sent in a compressed format.

HTTP persistent connection

An HTTP persistent connection, or connection reuse, is the idea of using a single TCP connection to send and receive multiple HTTP requests and responses. It also enables HTTP pipelining, a technique in which multiple HTTP requests are sent on a single TCP connection without waiting for the corresponding responses. Pipelining is supported in HTTP/1.1 but not in HTTP/1.0. As explained earlier, the server must send its responses in the same order that the requests were received, so the entire connection remains FIFO (First-In-First-Out).
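The effect of the FIFO rule can be illustrated with a deliberately simplified model. The sketch below assumes every response becomes ready on the server at a known time and ignores transfer time entirely; the function names and the sample timings (apart from the 500 ms delay mentioned earlier) are hypothetical.

```python
from itertools import accumulate


def pipelined_delivery(ready_times_ms):
    """In-order (FIFO) delivery: a response cannot be sent before every
    earlier response on the same connection has been sent."""
    return list(accumulate(ready_times_ms, max))


def multiplexed_delivery(ready_times_ms):
    """Out-of-order delivery: each response is sent as soon as it is ready."""
    return list(ready_times_ms)


# First response is delayed by 500 ms; the next two are ready almost at once.
ready = [500, 20, 30]
print(pipelined_delivery(ready))    # in-order delivery times
print(multiplexed_delivery(ready))  # delivery times without the FIFO rule
```

In this model the two fast responses are held back until the slow first response is done, which is the head-of-line blocking that pipelining cannot avoid.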

What is SPDY Protocol?

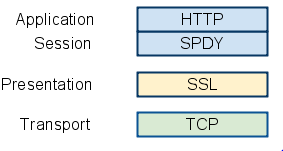

SPDY was an experimental protocol, developed primarily by Google during 2009, that aimed to reduce web page load latency, targeting a 50% reduction in page load time, and to improve web security. It achieved this by introducing multiplexing, request prioritization, and header compression. The SPDY specification was adopted as a starting point for the new HTTP/2 protocol. SPDY adds a session layer atop SSL that allows for multiple concurrent, interleaved streams over a single TCP connection.

Basic features of SPDY include:

- Multiplexed streams

SPDY allows for unlimited concurrent streams over a single TCP connection. Because requests are interleaved on a single channel, the efficiency of TCP is much higher: fewer network connections need to be made, and fewer, but more densely packed, packets are issued.

- Request prioritization

SPDY implements request priorities: the client can request as many items as it wants from the server, and assign a priority to each request. This prevents the network channel from being congested with non-critical resources when a high priority request is pending.

- HTTP header compression

SPDY compresses request and response HTTP headers, resulting in fewer packets and fewer bytes transmitted. The overhead of uncompressed headers is considerable, especially when you consider the impact upon mobile clients, which typically see round-trip latency of several hundred milliseconds, even under good conditions. The cause is TCP's Slow Start mechanism, which paces packets out on new connections based on how many packets have been acknowledged, effectively limiting the number of packets that can be sent for the first few round trips. In comparison, even mild compression on headers allows those requests to get onto the wire within one round trip, perhaps even one packet.

By 2012, SPDY was supported in Chrome, Firefox, and Opera. It also started to become a de facto standard through growing adoption by sites such as Google, Twitter, and Facebook.
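To make the slow-start effect on request headers concrete, here is a back-of-the-envelope sketch. It assumes an idealized slow start with no loss, a window that doubles every round trip, a segment size of 1460 bytes, and an initial window of 10 segments; all of these values, the request count of 80, and the 100-byte compressed header size are illustrative assumptions rather than figures from the text. Only the 800-byte header size comes from the range quoted earlier.

```python
import math


def slow_start_round_trips(total_bytes, mss=1460, initial_window=10):
    """Round trips needed to send total_bytes when the congestion window
    starts at initial_window segments and doubles each round trip."""
    segments = math.ceil(total_bytes / mss)
    window, sent, trips = initial_window, 0, 0
    while sent < segments:
        sent += window   # everything allowed by the current window goes out
        window *= 2      # idealized doubling once those segments are acked
        trips += 1
    return trips


requests = 80  # hypothetical number of assets on a page
print(slow_start_round_trips(requests * 800))  # uncompressed headers
print(slow_start_round_trips(requests * 100))  # hypothetical compressed size
```

Under these assumptions the uncompressed headers need several round trips before they are all on the wire, while the compressed ones fit in the first one.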

Birth of HTTP/2 Protocol

In March 2012, the HTTP Working Group (HTTP-WG) issued a call for proposals for HTTP/2 and used the SPDY specification as the starting point for the new protocol. Over the next few years, SPDY and HTTP/2 continued to evolve in parallel, with SPDY acting as an experimental branch that was used to test new features and proposals for the HTTP/2 standard. SPDY offered a route to test and evaluate each proposal before its inclusion in the HTTP/2 standard.

In early 2015, the Google Chrome team officially announced that it planned to remove support for SPDY in early 2016 and strongly encouraged server developers to move to HTTP/2 and ALPN (Application-Layer Protocol Negotiation). ALPN is a TLS extension that HTTP/2 uses to negotiate which protocol will be spoken over a secure connection.
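As a rough sketch of what ALPN looks like from the client side, the following uses Python's standard `ssl` module to offer `h2` (HTTP/2 over TLS) ahead of `http/1.1` and to report which one the server selected. The helper names `make_h2_context` and `negotiated_protocol` are hypothetical, and the second function needs network access to a host of your choosing.

```python
import socket
import ssl


def make_h2_context() -> ssl.SSLContext:
    """Client TLS context that offers HTTP/2 first, then HTTP/1.1."""
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # HTTP/2 requires TLS 1.2+
    ctx.set_alpn_protocols(["h2", "http/1.1"])    # preference order
    return ctx


def negotiated_protocol(host: str, port: int = 443, timeout: float = 5.0):
    """Connect to host and return the ALPN protocol the server picked
    ("h2", "http/1.1", or None if the server did not use ALPN)."""
    ctx = make_h2_context()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()
```

Calling `negotiated_protocol("example.org")` against an HTTP/2-capable server would be expected to return `"h2"`; a server without HTTP/2 support would typically fall back to `"http/1.1"`.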

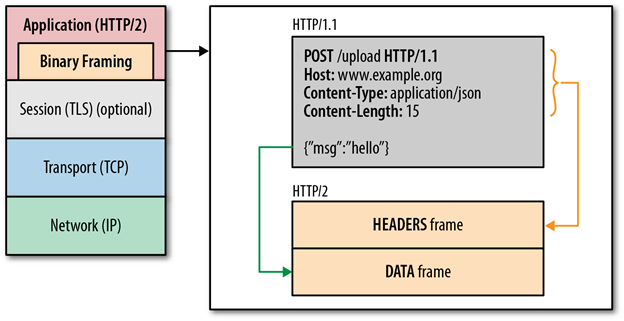

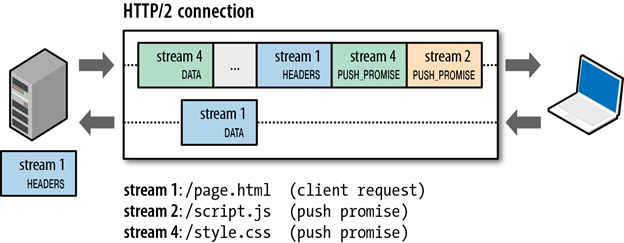

HTTP/2 terminology includes streams and frames. A stream is a bidirectional flow of bytes within an established connection, which may carry one or more messages. A frame is the smallest unit of communication in HTTP/2; each frame contains a frame header, which at a minimum identifies the stream to which the frame belongs.

HTTP/2 introduces a new binary framing layer that defines how encapsulated HTTP messages are transferred between the client and the server. Unlike the delimited plaintext HTTP/1.x protocol, all HTTP/2 communication is split into smaller messages and frames that are encoded in binary format. Therefore, both client and server must support and use the new binary encoding mechanism to understand each request and response.

This also means that an HTTP/1.x client won't understand an HTTP/2-only server, and vice versa. Textual or ASCII protocols may lead to parsing and security errors due to varying termination sequences and optional whitespace. HTTP/2 breaks down the HTTP protocol communication into an exchange of binary-encoded frames, which are then mapped to messages that belong to a particular stream, all of which are multiplexed within a single TCP connection. This foundation enables all other features and performance optimizations provided by the HTTP/2 protocol.
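To show what "binary framing" means in practice, here is a minimal sketch that decodes the fixed 9-byte frame header defined in RFC 7540: a 24-bit payload length, an 8-bit type, an 8-bit flags field, and a reserved bit followed by a 31-bit stream identifier. The `FrameHeader` type and `parse_frame_header` function are illustrative names, not part of any library.

```python
import struct
from typing import NamedTuple

# Frame type codes defined in RFC 7540.
FRAME_TYPES = {
    0x0: "DATA", 0x1: "HEADERS", 0x2: "PRIORITY", 0x3: "RST_STREAM",
    0x4: "SETTINGS", 0x5: "PUSH_PROMISE", 0x6: "PING", 0x7: "GOAWAY",
    0x8: "WINDOW_UPDATE", 0x9: "CONTINUATION",
}


class FrameHeader(NamedTuple):
    length: int      # payload length in bytes (24 bits on the wire)
    frame_type: str  # symbolic name, or "UNKNOWN(0x..)" for other codes
    flags: int       # type-specific flag bits
    stream_id: int   # 31-bit stream identifier; 0 means the connection itself


def parse_frame_header(data: bytes) -> FrameHeader:
    """Decode the first 9 bytes of an HTTP/2 frame."""
    if len(data) < 9:
        raise ValueError("an HTTP/2 frame header is 9 bytes long")
    high, low, type_code, flags, stream_word = struct.unpack("!BHBBI", data[:9])
    length = (high << 16) | low               # reassemble the 24-bit length
    stream_id = stream_word & 0x7FFFFFFF      # drop the reserved top bit
    name = FRAME_TYPES.get(type_code, f"UNKNOWN({type_code:#x})")
    return FrameHeader(length, name, flags, stream_id)


# A HEADERS frame with a 13-byte payload, END_HEADERS flag (0x4), on stream 1.
print(parse_frame_header(bytes.fromhex("00000d010400000001")))
```

Because every frame carries its own stream identifier, a receiver can interleave frames from many streams on one connection and still reassemble each message.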

Request Prioritization

Not all resources have equal priority when rendering a page in the browser. The HTML document itself is critical to construct the DOM, and the CSS is required to construct the CSSOM. Both DOM and CSSOM construction can be blocked on JavaScript resources, while the remaining resources, such as images, are often fetched with lower priority.

To accelerate the load time of the page, all modern browsers prioritize requests based on the type of asset, its location on the page, and even learned priority from previous visits. For example, if rendering was blocked on a certain asset in a previous visit, the same asset may be prioritized higher in the future.

With HTTP/1.x, the browser has limited ability to leverage this priority data: the protocol does not support multiplexing, and there is no way to communicate request priority to the server. Instead, the browser must rely on parallel connections, which enable limited parallelism of up to six requests per origin. As a result, requests are queued on the client until a connection is available, which adds unnecessary network latency.

HTTP/2 resolves these inefficiencies. Request queuing and head-of-line blocking are eliminated because the browser can dispatch all requests the moment they are discovered, and the browser can communicate its stream prioritization preference via stream dependencies and weights, allowing the server to further optimize response delivery. A stream dependency within HTTP/2 is declared by referencing the unique identifier of another stream as its parent; if the reference is omitted, the stream is said to be dependent on the "root stream". Declaring a stream dependency indicates that, if possible, the parent stream should be allocated resources ahead of its dependencies. Streams that share the same parent can be prioritized with respect to each other by assigning a weight to each stream.
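One way to read the weights is as proportional shares among sibling streams. The sketch below assumes that interpretation and the 1 to 256 weight range from RFC 7540; the function name, stream identifiers, and weights are made up for illustration, and a real server is free to treat priorities only as hints.

```python
def share_by_weight(weights):
    """Given {stream_id: weight} for streams that share one parent, return
    the fraction of the parent's resources each stream should receive."""
    for stream_id, weight in weights.items():
        if not 1 <= weight <= 256:
            raise ValueError(f"stream {stream_id}: weight must be in 1..256")
    total = sum(weights.values())
    return {stream_id: weight / total for stream_id, weight in weights.items()}


# Two sibling streams: stream 1 with weight 12, stream 3 with weight 4.
print(share_by_weight({1: 12, 3: 4}))
```

With weights 12 and 4, the first stream would ideally receive three quarters of the available resources and the second one quarter.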

HPACK Compression

SPDY initially addressed header redundancy by compressing header fields using the DEFLATE format, which proved very effective at representing the redundant header fields. However, that approach exposed a security risk, as demonstrated by the CRIME attack. CRIME (Compression Ratio Info-leak Made Easy) exploits the way stream compression such as TLS compression or GZIP leaks information through the size of the compressed data, which can be used to recover sensitive information like an authentication cookie. With CRIME, it's possible for an attacker who has the ability to inject data into the encrypted stream to "probe" the plaintext and recover it. Since this is the Web, JavaScript makes this possible, and there were demonstrations of recovery of sensitive information like cookies and authentication tokens using CRIME for TLS-protected HTTP resources.

As a result, HTTP/2 does not use GZIP compression for headers and instead introduces a new header-specific compression format called HPACK.

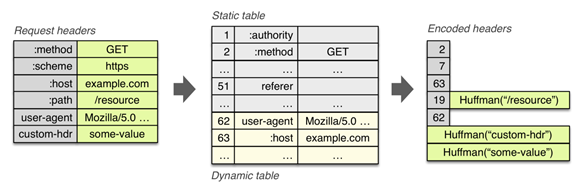

- It allows the transmitted header fields to be encoded via a static Huffman code, which reduces their individual transfer size.

- It requires that both the client and server maintain and update an indexed list of previously seen header fields (i.e. establishes a shared compression context), which is then used as a reference to efficiently encode previously transmitted values.

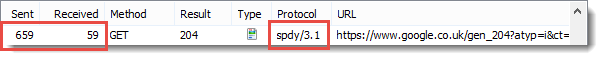

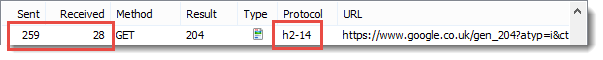

A comparison of request and response header sizes in SPDY/3.1 and HTTP/2 shows that HPACK compression produces significantly smaller headers than the DEFLATE compression used by SPDY.

The static table is defined in the specification and provides a list of common HTTP header fields that all connections are likely to use (e.g. valid header names). The dynamic table is initially empty and is updated based on the values exchanged within a particular connection. As a result, the size of each request is reduced by using static Huffman coding for values that haven't been seen before, and by substituting indexes for values that are already present in the static or dynamic tables on each side.
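The interplay of the two tables can be sketched with a toy encoder. This is a simplification and not the HPACK wire format: it skips Huffman coding, table size limits, and eviction, and only shows how a repeated header field turns into a small index. The static entries are a short excerpt of the 61-entry table in RFC 7541; the class name and the sample headers are hypothetical.

```python
from collections import deque

# Excerpt of the HPACK static table (RFC 7541, Appendix A).
STATIC_TABLE = {
    (":method", "GET"): 2,
    (":method", "POST"): 3,
    (":path", "/"): 4,
    (":scheme", "https"): 7,
}
STATIC_TABLE_SIZE = 61  # dynamic entries are numbered from 62 upward


class ToyHeaderEncoder:
    """Replaces known header fields with table indexes (illustration only)."""

    def __init__(self):
        self.dynamic = deque()  # newest entry first, as in HPACK

    def encode(self, headers):
        out = []
        for field in headers:
            if field in STATIC_TABLE:
                out.append(STATIC_TABLE[field])
            elif field in self.dynamic:
                out.append(STATIC_TABLE_SIZE + 1 + self.dynamic.index(field))
            else:
                out.append(field)               # sent as a literal this time
                self.dynamic.appendleft(field)  # remembered for later requests
        return out


encoder = ToyHeaderEncoder()
request = [(":method", "GET"), (":path", "/"), ("user-agent", "demo/1.0")]
print(encoder.encode(request))  # first request: one literal field
print(encoder.encode(request))  # second request: indexes only
```

A real decoder keeps an identical dynamic table, which is why both sides must process header blocks in the same order on a given connection.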

Server Push

HTTP/2 enables the server to send multiple responses for a single client request. That is, in addition to the response to the original request, the server can push additional resources to the client, without the client having to request each one explicitly. The protocol breaks away from the strict request-response semantics and enables one-to-many and server-initiated push workflows that open up a world of new interaction possibilities both within and outside the browser. The advantages of pushed resources include that they can be cached by the client and reused across different pages.

Conclusion:

HTTP/2 may not be an immediate success, but the standard has been well received by experts in the industry. HTTP/1.x will be around for at least another decade, and most servers and clients will have to support both HTTP/1.x and HTTP/2. Though HTTP/2 does not require the use of TLS, many implementations have stated that they will support HTTP/2 only over an encrypted channel. HTTP/2 also emphasizes strong encryption: deployments over TLS must use TLS 1.2 or higher, disable TLS compression, support ephemeral key exchange sizes of at least 2048 bits for cipher suites that use DHE (ephemeral Diffie-Hellman) and 224 bits for cipher suites that use ECDHE (ephemeral Elliptic Curve Diffie-Hellman), and avoid the blacklisted weak cipher suites.

References:

http://mobiforge.com/design-development/getting-ready-for-http-2-0

https://www.chromium.org/spdy/spdy-whitepaper

http://chimera.labs.oreilly.com/books/1230000000545/ch12.html

https://blog.httpwatch.com/2015/01/16/a-simple-performance-comparison-of-https-spdy-and-http2/