Exploiting Public Information for OSINT

Open source intelligence (OSINT) is the practice of finding information using publicly available sources; these sources could be anything, for instance newspapers, business directories and annual reports. The scope of OSINT is not limited to the Internet. The core responsibility of a professional working on OSINT is to connect the dots (data acquired from many sources), which is a difficult and time-consuming process without automated tools and scripts.

Search engines always play a significant role in finding relevant information, thanks to advanced operators and query management. Apart from search engines and other passive techniques, an attacker sometimes has to establish an active connection with the target. In this article, we will discuss numerous tools that use both active and passive techniques to find information about a company, a person and even a product.

People Search Engines

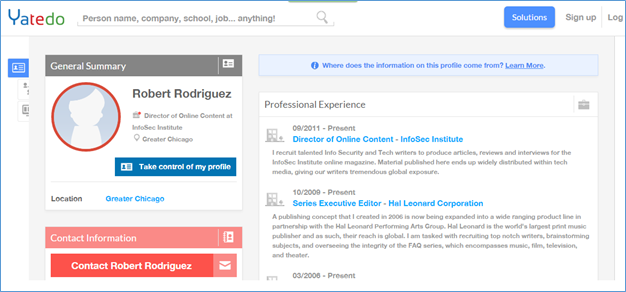

At times you want to find the details of a person. This person may belong to an organization you are investigating, and may be a CEO, a director or any other key player who could be used to harm that organization. Whatever the objective, you can always use a people search engine to find the details of a person. A quick search on yatedo.com reveals the profile of an employee.

The following steps show how to gather information about the employees of any organization.

Step 1: Prepare the list of employees. LinkedIn, Xing, Yatedo and other professional networking websites help in preparing this list.

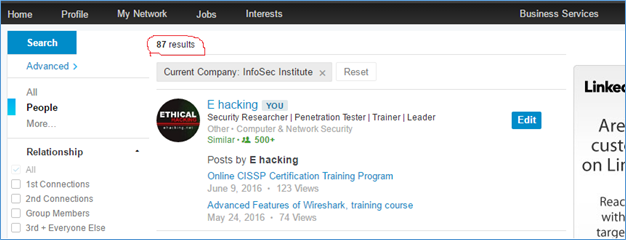

Figure 2

Use LinkedIn's powerful filters to narrow the search results; Figure 2 shows that 87 individuals currently work at Infosec Institute. List all of the employees with their industry, job function and seniority level, because this information helps to launch an attack, and luckily all of it is available on LinkedIn. Wait a minute: LinkedIn restricts access based on previous search history and connection level.

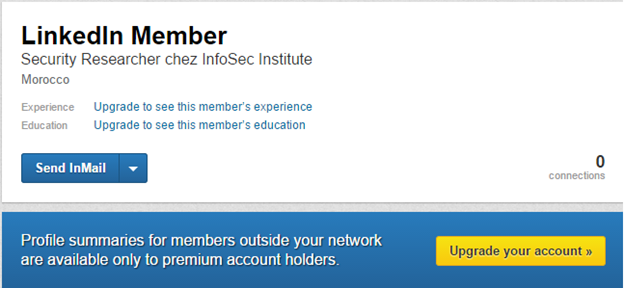

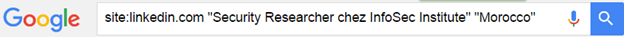

A premium account is one solution, but it is not the only one. The picture above shows that even restricted access gives enough information to create a Google dork and get access to the public profile:

And it lets you in. Using a private window or logging in with a different account always helps:

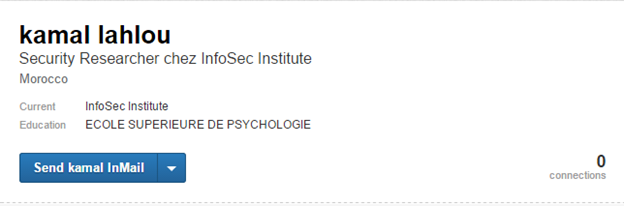
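The exact dork is not reproduced in the text. As a minimal sketch, assuming the restricted result still reveals a name and an employer, such a query could be assembled as follows. The function names, the `linkedin.com/in` path and the sample name are illustrative assumptions, not the query used in the screenshots.

```python
from urllib.parse import quote_plus


def build_profile_dork(full_name: str, company: str,
                       site: str = "linkedin.com/in") -> str:
    """Build a search query limited to public profile pages on one site.

    The site: operator and quoted phrases are standard search operators;
    the default site path is an assumption for illustration.
    """
    return f'site:{site} "{full_name}" "{company}"'


def search_url(query: str) -> str:
    """URL-encode the query so it can be pasted into a browser."""
    return "https://www.google.com/search?q=" + quote_plus(query)


# Hypothetical name, used only to show the shape of the query.
dork = build_profile_dork("Jane Doe", "Infosec Institute")
print(dork)
print(search_url(dork))
```

Quoting the name and the employer keeps the results close to the profile that the restricted view only partially showed.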

Step 2: Once the basic information has been identified, harvest it and select the key players. The choice depends on your objective: you can target an HR professional to get further information about an individual, or you can target a marketing manager to help him launch the next campaign. Once the key players have been identified, try searching for their personal details:

You need to understand the life your target is living: what he does, when he does it, with whom he hangs out, his daily routine and his weekend plans as well. Social networking websites do most of the work here; you can get education and work history from LinkedIn, while interests, hobbies, family and relationships can be seen on Facebook and Twitter.

Spock.com service result:

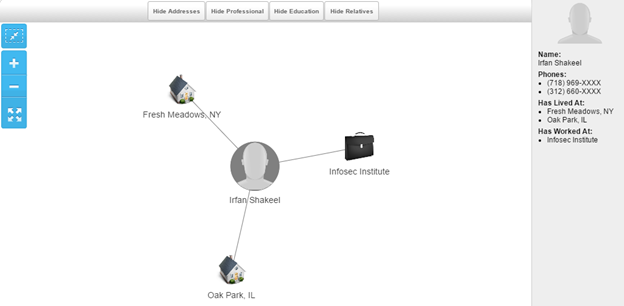

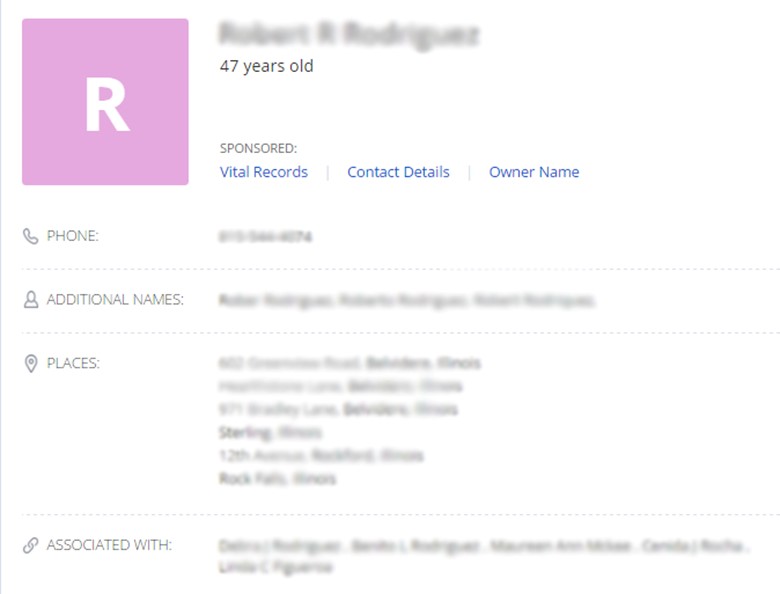

It shows the places the person has lived, the employer name and possibly contact information. Let's go deeper: here is someone who travels a lot, has lived in many places, works somewhere and has relationships (spouse and children).

The manual way of verifying the relationship details is to actively monitor the social media profiles of the target and his/her relatives. Pipl.com also gives detailed information about the searched profile:

A directory-based attack can be launched to guess email addresses. For example, an attacker contacts an employee and gets the email addresses of other employees; a social engineering trick works here. Once he understands the pattern, he can guess the rest of the email addresses. Using the first and last name, for instance, is a common practice in organizations: firstname.lastname@domain.com

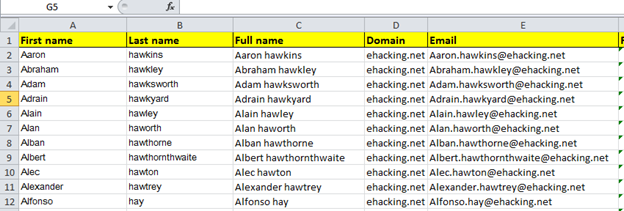

Use an Excel sheet to automate the task:

Formula: =CONCATENATE(firstname,".",lastname,"@",domain) (replace firstname, lastname and domain with their respective cell references).
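The same concatenation can be scripted outside a spreadsheet. The following is a minimal sketch, assuming the firstname.lastname pattern described above; the function name `build_email`, the sample names and the `example.com` domain are placeholders for illustration only.

```python
def build_email(first: str, last: str, domain: str) -> str:
    """Mirror the spreadsheet formula: first.last@domain, lower-cased.

    Whitespace inside a name part is dropped so "Mary Ann" becomes "maryann";
    real organizations may handle such names differently.
    """
    first_part = "".join(first.split()).lower()
    last_part = "".join(last.split()).lower()
    return f"{first_part}.{last_part}@{domain.strip().lower()}"


# Hypothetical employee list harvested in Step 1.
employees = [("Jane", "Doe"), ("John", "Smith")]
candidates = [build_email(first, last, "example.com") for first, last in employees]
print(candidates)
```

The output is only a list of guesses; each address still has to be verified before it is treated as valid.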

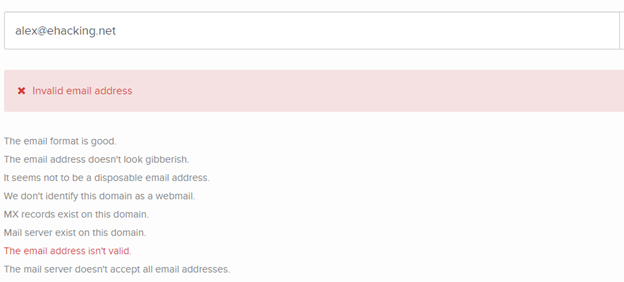

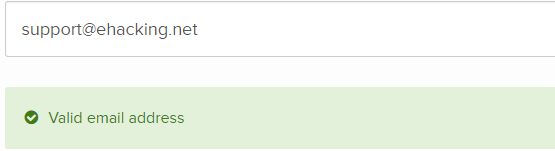

The next step is to verify the details. You can do this manually by checking against the mail server, but services like emailhunter do the job efficiently:

Get a premium account and upload the list harvested earlier; the tool will return the output with the correct email addresses.

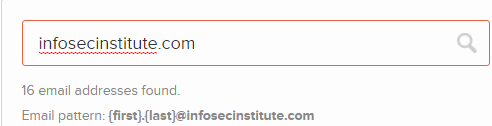

This service not only verifies email addresses, but also discovers them along with the pattern in use.

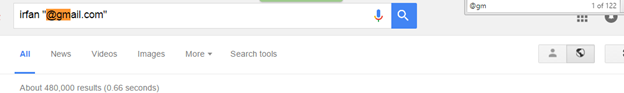

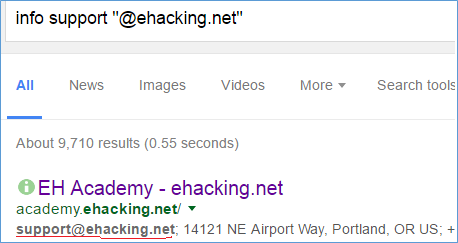

A simple Google search query shows 100 email addresses per page:

Spammers use the same technique to scrape email addresses from search engines, but collecting random email addresses is not the objective, so let's tweak it a little:
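The tweaked query itself is not reproduced in the text. As a rough sketch of the same idea, the snippet below keeps only the addresses that belong to the target's domain from text that has already been collected. The regular expression is a pragmatic approximation rather than a full address validator, and the function name and sample text are assumptions.

```python
import re

# Loose pattern for addresses that appear in plain text on a page.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(text: str, domain: str) -> list[str]:
    """Return the unique, lower-cased addresses in text that end with @domain."""
    suffix = "@" + domain.lower()
    found = {match.lower() for match in EMAIL_RE.findall(text)}
    return sorted(address for address in found if address.endswith(suffix))


# Hypothetical snippet of scraped search results.
sample = "Contact jane.doe@example.com or bob@other.org; Jane.Doe@Example.com again."
print(extract_emails(sample, "example.com"))
```

Filtering by domain is what separates targeted harvesting from the random scraping that spammers do.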

Publishing an email address in plain text is a mistake, and the attacker takes advantage of it. To name some people and company search engines:

- 192.com (for the UK)

- Searchbug.com

- Companycheck.co.uk (for the UK)

- Freecarrierlookup.com (phone carrier check)

- Phonebookoftheworld.com

- Zoominfo.com

- Anywho.com

- Europages.co.uk (Europe)

- Connect.data.com

- Wayp.com (International)

- Investigativedashboard.org

Exploiting the Technology Infrastructure for Information

A website is not just a front page that carries information about an organization; the website itself depends on many components, and it carries much important information that could be used against its own organization.

Let's analyze the technology infrastructure starting with the hardware search:

- Shodan.io

- censys.io

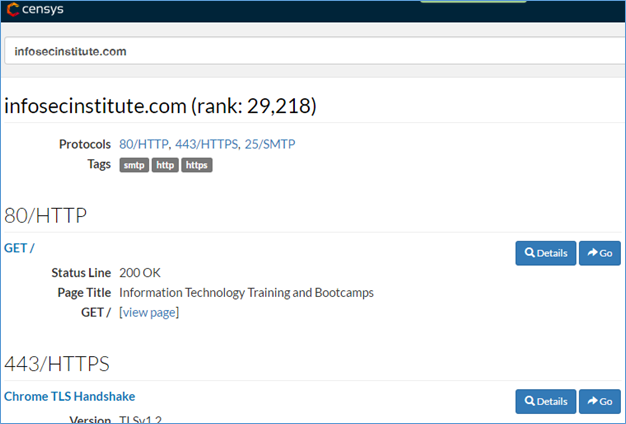

The basic Censys scan shows the listening ports and their associated services, along with certificates.

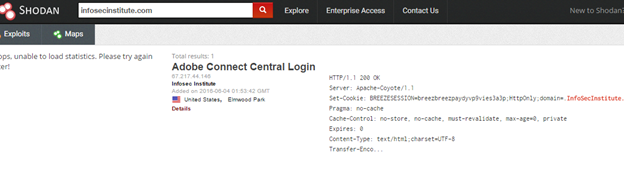

Shodan discovered another service running on the server:

Both Shodan and Censys discover Internet-connected devices, their services and open ports. Apart from the running services, we need to find out the server details as well. The following services do that:

- centralops.net

- network-tools.com

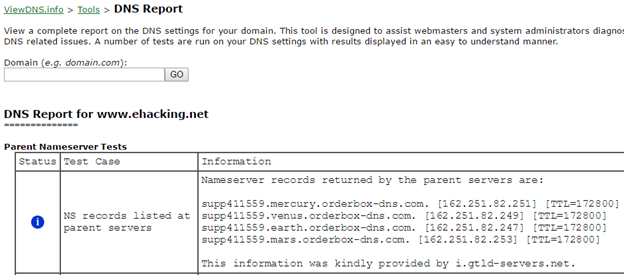

- viewdns.info

What information to look for?

- DNS records

- Nameserver details

- Netblock

- Server IP address

- MX, CNAME and SOA records

A DNS report from viewDNS shows a lot of interesting information about the technology infrastructure. Maintain logs of the information that you get during the investigation process; this information helps to solve a problem as well as to launch an attack.
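One way to keep such logs is a small structured record per domain. The sketch below is an assumption about how the items listed above could be stored, not the output format of viewDNS or any other service; the class name, field names and sample values (documentation-range IPs and `example.com`) are illustrative.

```python
import ipaddress
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DnsFindings:
    """One log entry holding the details gathered for a single domain."""
    domain: str
    server_ip: str
    netblock: str
    nameservers: list[str] = field(default_factory=list)
    # Other records keyed by type, e.g. {"MX": [...], "CNAME": [...], "SOA": [...]}
    records: dict[str, list[str]] = field(default_factory=dict)

    def ip_in_netblock(self) -> bool:
        """Sanity check: does the server IP fall inside the recorded netblock?"""
        return ipaddress.ip_address(self.server_ip) in ipaddress.ip_network(self.netblock)

    def to_json_line(self) -> str:
        """Serialize the entry as one JSON line, suitable for appending to a log file."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical values copied by hand from a DNS report.
entry = DnsFindings(
    domain="example.com",
    server_ip="192.0.2.10",
    netblock="192.0.2.0/24",
    nameservers=["ns1.example.com", "ns2.example.com"],
    records={"MX": ["mail.example.com"], "SOA": ["ns1.example.com"]},
)
print(entry.ip_in_netblock())
print(entry.to_json_line())
```

Keeping one JSON line per domain makes it easy to compare reports taken at different times during the investigation.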

Domain Dossier from Centralops gives the complete picture of the infrastructure, including the server IPs, netblock, IP WHOIS, network WHOIS record with contact details, DNS analysis and running services. This information is enough to understand what type of network the target runs and what services it uses. Points to note:

- Is this a shared server or private one? And are they hosting any other website on the server? (use the reverse IP lookup to find out)

- Are the IPs blacklisted, or do they host any malware? (use IPVoid.com to test)

- Is there anything suspicious? Domain hijacking? (check the DNS records)

- What about the sub-domains and mail servers? See what this organization is hosting besides its main website, for example private software that you might be interested in.

Keep in mind that the objective is not just to acquire the information, but to process it and use it to produce meaningful output.

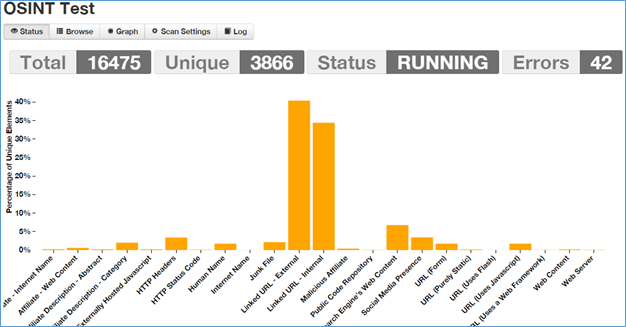

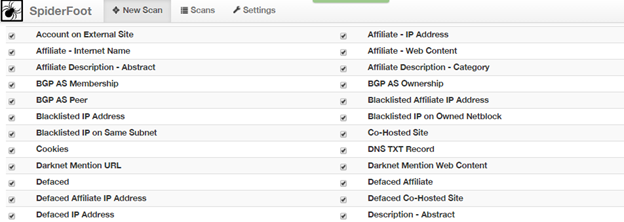

Automating the OSINT Process using Spiderfoot

Spiderfoot is an open source intelligence automation framework; it does many jobs by itself using its robust spiders. It is valuable when investigating the technology infrastructure; however, it will not help with people searching. It draws on a large number of data sources, over 40 so far and counting, including SHODAN, RIPE, Whois, PasteBin, Google, SANS and more.

Some of the key data sources are:

- abuse.ch

- AdBlock

- AlienVault

- Autoshun.org

- malc0de.com

- Onion.City (search engine for dark web)

- PGP Servers (PGP public keys)

- Project Honeypot

- RIPE/ARIN

- And others…

An intense scan shows:

- Human names (real people associated with a domain), which is interesting

- Web server details

- Malicious affiliation

- Internal and external links

- Externally hosted JavaScript

- HTTP header details and the server codes

- Social media presence

It has an extensive set of modules to select from; the selection of modules depends on the need and the objective. You may only need to find out whether the server is blacklisted, or whether there is any record of it having been hacked previously.

So this is how an attacker or an investigator scans and thoroughly reviews the information already available on the Internet. The information is already there; you just need a smart approach, techniques and tools to find it.