Application Security for Beginners: A Step-by-Step Approach

Introduction to web application security

The Web has evolved a lot over time. It started as a means of exchanging information, and now it is used for almost everything: entertainment, healthcare, the home, and more. From a functionality standpoint, the web has come a long way. From a security perspective, however, there is still a lot to do. Ideally, security should be built in alongside functionality from the initial phase; if it was not, plugging the holes is always an option. Web applications are tempting targets for attackers because they are exposed to the world and sit on the border between trusted and untrusted zones. This article explains a methodology for what to look for, and where to look, when performing vulnerability analysis of web applications.

What do we need in our arsenal?

Proxy - Burp Suite (free version): https://portswigger.net/burp/

- Allows messages to be intercepted for review or silently forwarded

- Keeps a history of requests and responses for later review

- Lets you modify messages as needed

Foxy Proxy: https://addons.mozilla.org/en-US/firefox/addon/foxyproxy-standard/

- Helps to switch between different proxy settings.

Wappalyzer: https://addons.mozilla.org/en-US/firefox/addon/wappalyzer/

- Identifies the technologies the website uses.

User agent switcher: https://addons.mozilla.org/en-US/firefox/addon/user-agent-switcher/

- See how the web application reacts to different browsers

Fireshot: https://addons.mozilla.org/en-US/firefox/addon/fireshot/

- Captures screenshots of web pages

Nikto: https://cirt.net/Nikto2

- Web server scanning

Vulnerable Web Application (bWAPP): http://www.itsecgames.com/

VirtualBox: https://www.virtualbox.org/

- Download the application and import it into VirtualBox.

- Start the virtual machine and check its IP address with "ifconfig."

- Open the IP in the browser

Nmap - https://nmap.org/

- Network mapping

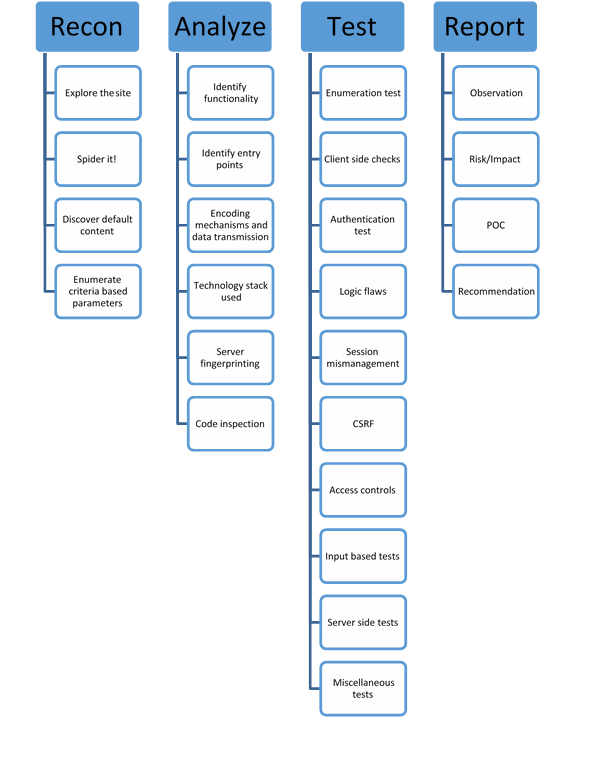

4-Step Checklist Methodology

Recon

Explore the site

Browse the site: follow links, visit different pages, and intercept the requests to get a feel for the application, its size, and its UI.

Spider it

A spider is a web crawler that indexes the site and builds its structure, or skeleton. Spidering can be active or passive. An active spider sends requests to every link it finds on the website and builds the map from the responses. A passive spider sends no requests of its own; it builds the map from the pages you visit while browsing the website.
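The passive idea, building a map from pages you have already seen, can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not how Burp's spider works: `LinkExtractor` and `same_site_links` are hypothetical names, the page HTML is assumed to be already captured, and 192.168.56.101 stands in for your lab VM's address. An active spider would additionally request each new link and repeat the process.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects link targets (href, src, form action) from one HTML page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src", "action") and value:
                # Resolve relative links against the URL of the page being parsed
                self.links.add(urljoin(self.base_url, value))


def same_site_links(base_url, html):
    """Return the page's links that stay on the same host, sorted for stable output."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    host = urlparse(base_url).netloc
    return sorted(link for link in parser.links if urlparse(link).netloc == host)


page = '<a href="/login.php">Login</a><form action="search.php"></form><a href="https://example.org/">Ext</a>'
print(same_site_links("http://192.168.56.101/bWAPP/portal.php", page))
# ['http://192.168.56.101/bWAPP/search.php', 'http://192.168.56.101/login.php']
```

Off-site links (and schemes such as mailto:) are dropped because their host does not match, which keeps the map inside the target.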

Fig 1: Active spidering a website

Discover default content

Discover default content using tools such as Nikto and httprint. Check the root directory of the website by typing just its IP address into the browser. Are you redirected to the login page?

Fig 2: Nikto scan of a website

Enumerate Criteria based parameters

Identify the pages that require user-supplied input. Is the request a GET or a POST? Are any default parameters passed, e.g., debug=true?
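A small helper can list the parameters of a captured request and flag names that look like debug switches. This is a sketch under assumptions: the `SUSPICIOUS_NAMES` list is hypothetical, POST bodies are assumed to be URL-encoded form data, and the request is assumed to have been copied out of the proxy history by hand.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical watch-list of names that often hint at hidden debug or admin switches
SUSPICIOUS_NAMES = {"debug", "test", "admin", "dev", "trace", "verbose"}


def enumerate_params(method, url, body=""):
    """Collect parameters from a captured request and flag suspicious names.

    Query-string parameters are always read; for POST, the body is assumed
    to be application/x-www-form-urlencoded (JSON bodies are not handled).
    """
    params = parse_qs(urlsplit(url).query, keep_blank_values=True)
    if method.upper() == "POST" and body:
        for name, values in parse_qs(body, keep_blank_values=True).items():
            params.setdefault(name, []).extend(values)
    suspicious = sorted(name for name in params if name.lower() in SUSPICIOUS_NAMES)
    return params, suspicious


params, flags = enumerate_params("GET", "http://192.168.56.101/page.php?id=5&debug=true")
print(params, flags)  # {'id': ['5'], 'debug': ['true']} ['debug']
```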

Analyze

Identify functionality

What is the site intended to do?

- Identify entry points

- Encoding mechanism and data transmission

- Technology stack used

Fig 3: Technology stack used by the website (Wappalyzer)

- Server fingerprinting

- Code Inspection

Check the comments in the page source. Search for usernames and passwords in case they are hard-coded, for backdoors the developers wrote for debugging, and for default accounts that were not deleted before the application was pushed to production.
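Scanning the page source for comments that mention credentials or debug code is easy to script. The sketch below assumes you have saved the HTML of each page; the keyword list is a hypothetical starting point, and comments inside JavaScript (`//` or `/* */`) are not covered.

```python
import re

# Matches HTML comments, including ones that span several lines
COMMENT_RE = re.compile(r"<!--(.*?)-->", re.DOTALL)
# Hypothetical keyword list; extend it for the application under test
KEYWORDS = ("password", "passwd", "username", "todo", "debug", "backdoor", "admin")


def interesting_comments(html):
    """Return comment bodies that mention any keyword (case-insensitive match)."""
    hits = []
    for match in COMMENT_RE.finditer(html):
        text = match.group(1).strip()
        if any(word in text.lower() for word in KEYWORDS):
            hits.append(text)
    return hits


source = "<p>Hi</p><!-- layout v2 --><!-- TODO: remove test account admin/bug -->"
print(interesting_comments(source))  # ['TODO: remove test account admin/bug']
```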

Test

Test the application using the information gathered in the previous phases.

Authentication test

Is authentication a simple form, multi-factor, or CAPTCHA-based? Check whether CAPTCHAs repeat and how the CAPTCHA value is sent in the request. If an OTP is used, is it sequential, or does it follow a pattern? Check whether an OTP or a link can be reused.

What account recovery features are present? Can users recover their accounts themselves, or does the request go to an admin? Most sites have a self-service recovery mechanism. Do they use security questions? If so, check how complex the questions are. Does the site send a recovery link? Check whether the link is sent to the registered email address or whether the user is asked to enter an email address manually. If the email address is not asked for, check in the intercepted request whether it is sent as a hidden parameter. Try to get the reset link delivered to a different email address or phone number.

Is autocomplete enabled on pages that handle sensitive information? Right-click the field and inspect the element. If autocomplete is not set to off, or the attribute is missing (the default is on), an attacker can reveal a saved password or other sensitive information by changing the field's type attribute in the code from "password" to "text." The attacker needs local access to the machine to get the information this way.

Are there password complexity checks in place? If not, try brute-force or dictionary attacks. Check the error the application returns when the username is incorrect, when the password is incorrect, and when both are incorrect. Does it state explicitly which one is wrong? If so, this can lead to valid user enumeration. If the error is generic, intercept the responses, right-click them, and send them to Burp Suite's Comparer to check for differences between the incorrect-username and incorrect-password responses.
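Burp Suite's Comparer does this interactively; the same idea can be sketched with Python's `difflib` for responses you have saved as text. `response_diff` is a hypothetical helper, and the two bodies below are made-up examples where the only difference is a trailing period, the kind of subtle tell that can still enable user enumeration.

```python
import difflib


def response_diff(body_a, body_b):
    """Return only the lines that differ between two response bodies.

    Lines prefixed "- " appear only in body_a, "+ " only in body_b.
    """
    return [
        line
        for line in difflib.ndiff(body_a.splitlines(), body_b.splitlines())
        if line.startswith(("- ", "+ "))  # skip unchanged "  " and hint "? " lines
    ]


wrong_user = "<h1>Login</h1>\n<p>Invalid credentials</p>"
wrong_pass = "<h1>Login</h1>\n<p>Invalid credentials.</p>"
for line in response_diff(wrong_user, wrong_pass):
    print(line)
# - <p>Invalid credentials</p>
# + <p>Invalid credentials.</p>
```

An empty result for the two cases suggests the bodies are identical, though status codes, headers, and response times can still differ and are worth comparing too.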

Is an account lockout mechanism in place? Does the application state explicitly how many attempts are left before lockout? Can the counter be reset by switching browsers, altering the username, or restarting the browser?

Fig 4: Harvesting credentials from a local machine when password is remembered

Client side checks

Test whether you can enter numbers in the name field or special characters in the phone number field. If you can, injection attacks may be possible wherever the input is trusted by default instead of being handled properly. Are the credentials transmitted in the URL? Are they encrypted in transit? Does the site redirect to another site, and if so, are the credentials transmitted again, in plain text or encrypted?
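Credentials in a URL tend to leak into browser history, server logs, and Referer headers, so it is worth checking captured URLs systematically. This sketch assumes a hypothetical list of sensitive parameter names and only inspects the URL itself, not the request body or headers.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical parameter names that usually carry credentials or secrets
SENSITIVE = {"password", "passwd", "pwd", "pass", "token", "otp", "secret"}


def credential_exposure(url):
    """Return findings for one captured request URL (empty list = nothing found)."""
    parts = urlsplit(url)
    findings = []
    for name in sorted(parse_qs(parts.query, keep_blank_values=True)):
        if name.lower() in SENSITIVE:
            findings.append(f"credential-like parameter in URL: {name}")
    if parts.scheme == "http":
        findings.append("sent over plain HTTP, so not encrypted in transit")
    return findings


print(credential_exposure("http://192.168.56.101/login.php?login=bee&password=bug"))
```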

Logic flaws

Is the application doing what it is supposed to do? Does a request follow a particular flow, e.g., a transaction process? Try to break the flow by requesting a later step's URL directly or by refreshing the page at the last step, and check how the application responds.
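What the tester is probing for is whether the server, rather than the UI, enforces the order of the steps. A minimal sketch of such server-side enforcement, using a hypothetical four-step checkout flow, shows the two attacks from the paragraph above being rejected: jumping straight to a later step, and replaying the final step after the flow has finished. An application without an equivalent check is the logic flaw you are looking for.

```python
# Hypothetical four-step flow; the step names are illustrative only
FLOW = ["cart", "shipping", "payment", "confirm"]


class FlowTracker:
    """Server-side record of one user's progress through a multi-step flow."""

    def __init__(self):
        self.completed = []    # steps accepted so far, in order
        self.finished = False  # set once the final step has been accepted

    def submit(self, step):
        """Accept a step only if it is the next expected one."""
        if self.finished:
            return "rejected: flow already completed"  # e.g. a refresh on the last step
        expected = FLOW[len(self.completed)]
        if step != expected:
            return f"rejected: expected {expected}"    # e.g. jumping to a later URL
        self.completed.append(step)
        if step == FLOW[-1]:
            self.finished = True
        return "ok"


tracker = FlowTracker()
print(tracker.submit("confirm"))  # rejected: expected cart
```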

Session mismanagement

Capture several cookies and try to deduce the logic used to generate them; send a cookie to Burp Suite's Sequencer to check its randomness (entropy). Try to replay the cookie in another session. If it works, the session can be hijacked. Also check whether the session ID changes after login; if it does not, the application is vulnerable to session fixation. After logging out, press the browser's back button and check whether you can still access the application. Change the password and see whether the user is asked to log in again. Repeat this while logged in on two different browsers: does the password change take effect in the other session immediately, or only after the user logs out?
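To get a rough feel for what Sequencer measures, the sketch below computes the Shannon entropy of each character position across a sample of captured tokens. The sample tokens are made up; a real analysis needs many more samples, and Sequencer applies more thorough statistical tests than this.

```python
import math
from collections import Counter


def entropy_bits(symbols):
    """Shannon entropy, in bits, of a non-empty sequence of symbols."""
    counts = Counter(symbols)
    total = len(symbols)
    return sum(-(n / total) * math.log2(n / total) for n in counts.values())


def position_entropy(tokens):
    """Entropy of each character position across a sample of tokens.

    Positions that stay near 0 bits never change between sessions, so an
    attacker can predict them. This is a rough first look, not the
    statistical test suite Burp Sequencer runs.
    """
    length = min(len(t) for t in tokens)
    return [entropy_bits([t[i] for t in tokens]) for i in range(length)]


sample = ["user1-0001", "user1-0002", "user1-0003", "user1-0004"]
print(position_entropy(sample))
# [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 2.0]
```

Here only the last character varies, and only across four values, so the whole token carries about 2 bits of unpredictability: trivially guessable.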

CSRF (Cross Site Request Forgery)

Check the pages that contain forms, and check whether their requests carry an anti-CSRF token. Try changing the Referer header to a different value and replay the request. If the request still succeeds (for example, it returns a 200 OK response and the action is performed), the site may be vulnerable to CSRF attacks.
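Finding forms that lack an obvious anti-CSRF field can be scripted with the standard-library HTML parser. This is a heuristic sketch: the `TOKEN_HINTS` name fragments are assumptions, only POST forms are audited, and a form that does carry a token still needs the replay test above, because the server may not validate it.

```python
from html.parser import HTMLParser

# Hypothetical name fragments that usually identify anti-CSRF token fields
TOKEN_HINTS = ("csrf", "xsrf", "token", "nonce")


class FormAudit(HTMLParser):
    """Records, for each POST form, whether it contains a hidden token-like field."""

    def __init__(self):
        super().__init__()
        self.forms = []       # [action, has_token] for every POST form seen
        self._current = None  # index of the POST form currently open, if any

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "form":
            if (attrs.get("method") or "get").lower() == "post":
                self.forms.append([attrs.get("action") or "", False])
                self._current = len(self.forms) - 1
            else:
                self._current = None  # only POST forms are audited in this sketch
        elif tag == "input" and self._current is not None:
            if (attrs.get("type") or "").lower() == "hidden":
                name = (attrs.get("name") or "").lower()
                if any(hint in name for hint in TOKEN_HINTS):
                    self.forms[self._current][1] = True

    def handle_endtag(self, tag):
        if tag == "form":
            self._current = None


def forms_without_token(html):
    """Return the action URLs of POST forms that carry no token-like hidden field."""
    audit = FormAudit()
    audit.feed(html)
    return [action for action, has_token in audit.forms if not has_token]
```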

Access controls

Try to figure out the access control mechanism. Is access controlled via a parameter in the request? Is it enforced only in the UI (security through obscurity)? If access is controlled via the UI, try to access the page directly via its URL. For example, a normal user may not have permission to create users or modify permissions, while an admin does; request that page's URL directly while logged in with the normal account. Also check whether directory access is allowed: change the URL from https://test.com/images/test.html to https://test.com/images.
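The directory check generalizes to every parent directory of a URL. This hypothetical helper assumes the URL ends in a file name and produces the candidate directory URLs (with a trailing slash) to request, for example with a low-privilege session; the site root is left out.

```python
from urllib.parse import urlsplit, urlunsplit


def parent_directories(url):
    """List the parent directories of a file URL, deepest first (site root excluded)."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    dirs = []
    # Drop the last segment (the file name), then walk up one level at a time
    for depth in range(len(segments) - 1, 0, -1):
        path = "/" + "/".join(segments[:depth]) + "/"
        dirs.append(urlunsplit((parts.scheme, parts.netloc, path, "", "")))
    return dirs


print(parent_directories("https://test.com/images/test.html"))  # ['https://test.com/images/']
```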

Input based tests

How is the input handled: is it saved to a database, displayed on the screen, or sent in an email? If the input is displayed on the screen, the result of an injection attack can be seen on the screen immediately; if the input is saved in the database, the effect can be seen once the value is rendered from the database.

Fuzz the input with values such as the ones below to check whether the application is vulnerable to injection attempts. Fuzzing can be performed with Burp Suite's Intruder, and ready-made fuzzing wordlists are easy to find online. Below are some sample payloads for different types of injection attacks.

- <h1>Input value</h1>

- '

- ' --

- ;

- <script>alert(1)</script>

- <ScRiPt>alert(1)</SCRipt>

- <img src= "" onerror=alert(1) />

- ; ping 127.0.0.1;

- | ping 127.0.0.1 |

- phpinfo()
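When reviewing fuzzing results, a useful first question is whether each payload comes back unchanged or HTML-encoded. The sketch below classifies one response body. It is a coarse heuristic: a raw reflection is a lead, not proof of XSS, because the surrounding context (HTML body, attribute, script) decides whether the payload executes.

```python
import html


def reflection_status(payload, body):
    """Classify how a fuzzing payload comes back in a response body."""
    if payload in body:
        return "reflected raw"      # characters came back unchanged: investigate further
    if html.escape(payload) in body:
        return "reflected encoded"  # HTML output encoding appears to be applied
    return "not reflected"


payload = "<script>alert(1)</script>"
print(reflection_status(payload, "<p>Results for " + payload + "</p>"))  # reflected raw
```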

The input can also be encoded with Burp Suite's Decoder to check whether the encoded version gets through. Try payloads written in esoteric encodings as well; you may be surprised how few distinct characters an XSS payload needs (JSFuck, for example, writes JavaScript using only [ ] ( ) ! +). Converting the input into ASCII character codes and checking the server's response is another option.
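The encodings mentioned above are easy to generate for a whole payload list. This sketch mirrors a few common Burp Decoder transformations using only the Python standard library; `encoded_variants` is a hypothetical helper name, and the choice of encodings is illustrative rather than exhaustive.

```python
import base64
import html
from urllib.parse import quote


def encoded_variants(payload):
    """Return a few common encodings of one payload, keyed by encoding name."""
    url_once = quote(payload, safe="")  # percent-encode every reserved character
    return {
        "url": url_once,
        "double_url": quote(url_once, safe=""),  # "%" itself becomes "%25"
        "html": html.escape(payload),
        "base64": base64.b64encode(payload.encode("utf-8")).decode("ascii"),
        "char_codes": ",".join(str(ord(c)) for c in payload),  # decimal code points
    }


for name, value in encoded_variants("<b>").items():
    print(name, value)
```

Double URL encoding is included because some applications decode input twice, once in a front-end component and again in the application.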

Fig 5: Output before HTML injection

Fig 6: Output after HTML injection

Server-side tests

Search for known vulnerabilities in the server software being used. Does the server use default credentials? Is the JMX console or web console exposed? If so, research how they can be exploited. Intercept the requests and change the input values identified earlier: client-side checks may not be enforced on the server side. Run a port scan against the server to check which ports and services are open, and search the web for known exploits for the running services.
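Nmap is the right tool for the port scan, but the core idea of a TCP connect scan fits in a few lines. This sketch assumes you are scanning your own lab VM (the address in the example is hypothetical); it only reports ports that complete the TCP handshake and does no service or version detection.

```python
import socket


def open_ports(host, ports, timeout=0.5):
    """Return the ports on host that accept a TCP connection (a basic connect scan)."""
    found = []
    for port in ports:
        try:
            # create_connection completes the TCP handshake; the with-block closes it again
            with socket.create_connection((host, port), timeout=timeout):
                found.append(port)
        except OSError:
            pass  # refused, timed out (filtered), or unreachable
    return found


# Example against a lab VM (hypothetical address):
# print(open_ports("192.168.56.101", [21, 22, 80, 443, 8080]))
```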

Miscellaneous tests

Check whether the site uses SSL or TLS and which cipher suites are used. This is easy to check with https://www.ssllabs.com/ssltest/ or the sslscan tool in Kali Linux; Nmap can also enumerate the cipher suites with its ssl-enum-ciphers script.
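For a quick look at what a single connection negotiates, Python's `ssl` module reports the protocol version and cipher suite. This sketch has clear limits: it shows only the one combination the client and server agree on, not the full list of supported suites that SSL Labs, sslscan, or Nmap enumerate, and the default context refuses some legacy protocols outright. The deprecated-version set reflects RFC 8996, which deprecates TLS 1.0 and 1.1.

```python
import socket
import ssl

# SSLv2 and SSLv3 are obsolete; TLS 1.0 and 1.1 are deprecated by RFC 8996
DEPRECATED = {"SSLv2", "SSLv3", "TLSv1", "TLSv1.1"}


def tls_summary(host, port=443, timeout=5):
    """Connect once and report the negotiated protocol version and cipher suite."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            name, _protocol, bits = tls.cipher()
            return {"version": tls.version(), "cipher": name, "bits": bits}


def is_deprecated(version):
    """True if a version string as returned by SSLSocket.version() is outdated."""
    return version in DEPRECATED


# Example (needs network access): print(tls_summary("www.example.com"))
```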

Have you ever visited a site where clicking anywhere opened multiple tabs or a new browser window? That is a sign your clicks were being hijacked. In a clickjacking attack, another page, with its transparency set to 100% so it cannot be seen, is overlaid on what is visible on the screen. The user clicks on what is visible, but the click lands on the hidden page and the request is sent to another site. This is called stealing clicks, or clickjacking.

Fig 7: Overlapped web pages with transparency set to 70%

Check whether the responses set the X-Frame-Options header. Its values can be DENY, SAMEORIGIN, or the obsolete ALLOW-FROM.

Try to trigger errors and check whether the application reveals anything sensitive. It may be a server-side error message that is rendered in the page without being sanitized.
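The X-Frame-Options check can be applied to captured response headers with a small helper. This sketch takes a plain dictionary of headers (an assumption about how you exported them), and it does not consider the newer Content-Security-Policy frame-ancestors directive, which can also prevent framing.

```python
def framing_protection(headers):
    """Judge clickjacking protection from a dict of response headers."""
    # HTTP header names are case-insensitive, so normalize them first
    normalized = {name.lower(): value for name, value in headers.items()}
    xfo = normalized.get("x-frame-options", "").strip().upper()
    if xfo in ("DENY", "SAMEORIGIN"):
        return f"protected: X-Frame-Options {xfo}"
    if xfo.startswith("ALLOW-FROM"):
        return "weak: ALLOW-FROM is obsolete and ignored by current browsers"
    return "missing or invalid X-Frame-Options: page may be framed"


print(framing_protection({"X-Frame-Options": "sameorigin"}))  # protected: X-Frame-Options SAMEORIGIN
```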

If the application has file upload functionality, check whether you can upload an oversized file (maybe a 30 MB image). Try to upload a file without an extension, or with an extension other than the one expected.
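The upload tests above probe for server-side checks like the ones in this sketch. The allowed extensions and the 5 MB limit are hypothetical policy values. An extension check alone is weak, since a name such as shell.php.jpg passes it, so the server should also verify the file's content.

```python
import os

# Hypothetical policy values: adjust to what the application should accept
ALLOWED_EXTENSIONS = {".jpg", ".jpeg", ".png", ".gif"}
MAX_BYTES = 5 * 1024 * 1024  # 5 MB


def check_upload(filename, size_bytes):
    """Return the reasons an upload should be rejected (an empty list means accept)."""
    problems = []
    ext = os.path.splitext(filename)[1].lower()
    if not ext:
        problems.append("no extension")
    elif ext not in ALLOWED_EXTENSIONS:
        problems.append(f"extension {ext} not allowed")
    if size_bytes > MAX_BYTES:
        problems.append("file too large")
    return problems


print(check_upload("photo", 30 * 1024 * 1024))  # ['no extension', 'file too large']
```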

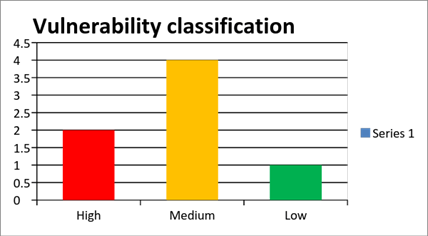

Report

No matter how severe an issue is, if it has not been documented well enough, no one is going to pay attention to it. Along with other details (Executive Summary, Scope, Timelines), a properly documented report must include at least the following:

- Observation: What has been identified?

- Risk/Impact: What is the impact? Is it affecting Confidentiality, Integrity, or Availability?

- POC: Proof of concept (screenshot or video) and steps to replicate the vulnerability.

- Recommendation: How to fix? Is input validation required? Do access checks need to be worked on?