LAYER 7 DDOS ATTACKS: DETECTION & MITIGATION

Introduction: Initial Detection/Mitigation Challenges

What should you learn next?

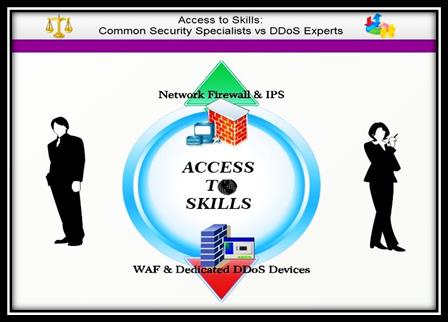

Before we turn to the main topic of this article, let us consider two factors that hinder the buildup of an effective defense against Layer 7 DDoS attacks. First, a lack of knowledge about this matter leads inexperienced IT security staff to take dubious and plainly inappropriate measures (see Diagram 4 below). Over-provisioning bandwidth, for example, does little against application-layer DDoS attacks, which usually consume relatively little bandwidth (Verisign, 2012). Instead, the defense mechanisms here should concentrate on other vital resources: memory, processing power, disk space, I/O, and upstream bandwidth (Abliz, 2011).

Second, consider the typical picture of hectic app development from the developer's and vendor's point of view: often a low budget, pressing deadlines, and many different tests (e.g., functionality, load, and security). It hardly comes as a surprise, then, that the quality of the applications themselves, and especially their security, suffers (Surace, 2013). These two factors, in concert with others, create weaknesses that black-hat hackers, inter alia, may exploit. In such a situation, detection and mitigation are like the only panels of a common but thin bulletproof vest that protects the vital organs the industry needs to function well.

DETECTION

Why Is It Difficult?

Prima facie, several factors combine to create a smoke screen that conceals an assault while it takes place:

- Network-layer DDoS detection methods cannot raise the alarm for application-layer DDoS attacks, since these attacks operate at a different layer (Prabha & Anitha, 2010).

- Layer 7 DDoS raids based on HTTP requests are unlikely to be detected by TCP anomaly mechanisms, because the TCP connections they use are established successfully (Prabha & Anitha, 2010).

- One thing leads to another: establishing TCP connections requires legitimate IP addresses and genuine IP packets, which in turn handicaps anomaly detection mechanisms that inspect IP packets (Prabha & Anitha, 2010).

- There are no intense traffic spikes. Layer 7 DDoS consumes far less bandwidth, requires lower attack capacity, and its traffic appears benign, leading victims to suspect more mundane causes such as system failure or application issues (Manthena, 2011).

- To continue the vicious spiral, the relatively calm traffic during most application-layer DDoS attacks makes them difficult to distinguish from so-called "flash crowds," i.e., sudden increases in requests made by legitimate users. Moreover, Layer 7 DDoS attacks resemble normal web traffic, as one security expert explains: "Layer 7 attacks are tough to defeat, not only because the incremental traffic is minimal, but because it mimics normal user behavior" (Chickowski, 2012, par. 4).

Traffic Monitoring

Nowadays, DDoS attacks are not only delivered via infected machines and proxies; attackers also often use highly automated tools. An analysis of their telltale signs is therefore advisable, for example headers that imitate normal browser headers but come with a high rate of unnatural user agents. In addition, the header order may be abnormal and not in accordance with usual browser behavior (Imperva, 2012).
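As a rough illustration of these telltale signs, the Python sketch below scores a single request by checking whether it claims to be a browser while missing headers that browsers normally send, or while sending them in an unusual order. The `EXPECTED_ORDER` list, the `suspicion_score` function, and its weights are hypothetical; real browser header orders differ by vendor and version, so any such reference would have to be calibrated against observed traffic.

```python
from typing import List, Tuple

# Hypothetical reference profile: headers a mainstream browser is assumed to
# send, in the relative order it is assumed to send them. Real browsers differ
# by vendor and version, so this list must be calibrated on observed traffic.
EXPECTED_ORDER = ["host", "user-agent", "accept", "accept-language", "accept-encoding"]


def suspicion_score(headers: List[Tuple[str, str]]) -> int:
    """Return a crude bot-likeness score for one request (higher is worse).

    `headers` is the list of (name, value) pairs in the order received.
    """
    names = [name.lower() for name, _ in headers]
    user_agent = {n.lower(): v for n, v in headers}.get("user-agent", "")
    score = 0

    # No User-Agent at all is unusual for a real browser.
    if not user_agent:
        score += 3

    # A browser-like User-Agent without the usual companion headers is odd.
    if "mozilla" in user_agent.lower():
        score += sum(1 for h in EXPECTED_ORDER if h not in names)

    # Headers that are present should appear in the expected relative order.
    present = [h for h in names if h in EXPECTED_ORDER]
    if present != sorted(present, key=EXPECTED_ORDER.index):
        score += 2

    return score
```

A request that sends only `User-Agent: Mozilla/5.0` followed by `Host` scores 5 here (three missing headers plus a reversed order), while a complete, correctly ordered browser request scores 0. The weights are arbitrary; the point is that several weak signals are combined rather than any single one being trusted.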

Yet perhaps the most important thing to remember is that a DDoS offensive, say of the HTTP type, usually has a string or pattern (even if not easily discernible) that can be used to separate attacking requests from legitimate ones. This might be, for instance, identical user agents employed by the attacking script, a common GET URL or POST request, or other shared HTTP header parameters (http://www.tech21century.com, 2012). In this regard, the general belief among DDoS security firms is that "most attack tools have some unique HTTP characteristics that can be extracted and provide a basis for detection" (Imperva, 2012, p. 15).
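One simple way to surface such a shared pattern is to group requests by a signature and look for a signature that dominates the traffic while arriving from many different addresses. The sketch below assumes a signature of (method, path, user agent); the `Request` type, the `dominant_signatures` function, and the `share` and `min_clients` thresholds are hypothetical choices for illustration.

```python
from collections import Counter
from typing import Dict, Iterable, List, NamedTuple, Set, Tuple


class Request(NamedTuple):
    client_ip: str
    method: str
    path: str
    user_agent: str


Signature = Tuple[str, str, str]  # (method, path, user agent)


def dominant_signatures(requests: Iterable[Request], share: float = 0.5,
                        min_clients: int = 10) -> List[Signature]:
    """Return signatures that account for at least `share` of all requests
    and are sent by at least `min_clients` distinct IP addresses."""
    counts: Counter = Counter()
    clients: Dict[Signature, Set[str]] = {}
    for r in requests:
        sig = (r.method, r.path, r.user_agent)
        counts[sig] += 1
        clients.setdefault(sig, set()).add(r.client_ip)
    total = sum(counts.values())
    if total == 0:
        return []
    return [sig for sig, n in counts.items()
            if n / total >= share and len(clients[sig]) >= min_clients]
```

Note that a flash crowd hitting one popular page with a common browser could also match, which is why a match like this is best treated as a candidate for closer, session-level inspection rather than as proof of an attack.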

It is important, however, to stress that only an analysis of such HTTP requests in the context of the entire session (IP/session/user; URLs, headers, parameters) can reveal the big picture, i.e., whether the activity actually constitutes a DDoS attack (Imperva, 2012).

Distinguishing Between Legitimate Traffic and Layer 7 DDoS Attacks

As already mentioned, distinguishing between legitimate and malicious requests is the master key that unlocks our Enigma code, leading to positive DDoS detection and, ideally, proper mitigation. The usual course is comprehensive traffic monitoring based on predefined traffic behavior profiles. These profiles are built from repeated measurements of the interactions between users and the website or application, stored as statistical data (Miu et al., 2013). The measurements in question are:

- Measurements Based on Connections to Server

Basically, every server can track and gather information on several units of measurement, which for the purpose of our discussion we call statistical attributes. The logic is simple: these statistical attributes are accumulated gradually and compiled into reference profiles that may prove handy when traffic looks suspicious. The template thus created is used to single out abnormal connections that tend to exploit vital application or server resources. Statistical attributes of importance are:

Request Rate and Download Rate

The number of requests or bytes downloaded by users within a given time interval.
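A minimal sketch of how a server might keep these two figures per client is a sliding window of timestamped events. The `SlidingWindowRate` class and its 60-second default window are assumptions for illustration; timestamps are plain seconds supplied by the caller.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Tuple


class SlidingWindowRate:
    """Track requests and downloaded bytes per client over the last `window` seconds."""

    def __init__(self, window: float = 60.0) -> None:
        self.window = window
        # client -> deque of (timestamp, bytes sent in the response)
        self.events: Dict[str, Deque[Tuple[float, int]]] = defaultdict(deque)

    def record(self, client: str, timestamp: float, nbytes: int) -> None:
        self.events[client].append((timestamp, nbytes))
        self._evict(client, timestamp)

    def _evict(self, client: str, now: float) -> None:
        # Drop events that fell out of the window (now - window, now].
        q = self.events[client]
        while q and q[0][0] <= now - self.window:
            q.popleft()

    def rates(self, client: str, now: float) -> Tuple[float, float]:
        """Return (request rate, download rate) per second for `client`."""
        self._evict(client, now)
        q = self.events[client]
        return len(q) / self.window, sum(b for _, b in q) / self.window
```

For example, with a 10-second window and one 1,000-byte response per second for ten seconds, `rates("a", 9.0)` returns `(1.0, 1000.0)`; by `t = 15` only the last four events remain and the result drops to `(0.4, 400.0)`.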

Uptime and Downtime

Uptime measures the duration of a user session, that is, the time from the user's connection to the server until the moment this connection is terminated. Conversely, downtime is the interval during which a user remains inactive, in other words disconnected, until he is back online and in touch with the server. Statistical data showing the proportions of individual protocols, the average session length, and the frequency distribution of TCP flags may also help to fill out profiles (Miu et al., 2013).
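Given one user's sessions as (connect, disconnect) timestamps, both figures follow directly from the definitions above. The function name `uptime_downtime` is hypothetical. A script that reconnects immediately after every disconnect would, for instance, show downtimes close to zero, which might stand out against the profile of normal users.

```python
from typing import List, Tuple


def uptime_downtime(sessions: List[Tuple[float, float]]) -> Tuple[List[float], List[float]]:
    """Given one user's sessions as (connect_time, disconnect_time) pairs,
    return the session durations (uptime) and the gaps between consecutive
    sessions (downtime)."""
    ordered = sorted(sessions)
    uptimes = [end - start for start, end in ordered]
    downtimes = [next_start - prev_end
                 for (_, prev_end), (next_start, _) in zip(ordered, ordered[1:])]
    return uptimes, downtimes
```

For sessions `(0, 30)`, `(40, 70)`, and `(100, 160)`, the uptimes are `[30, 30, 60]` and the downtimes are `[10, 30]`.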

Browsing Behavior

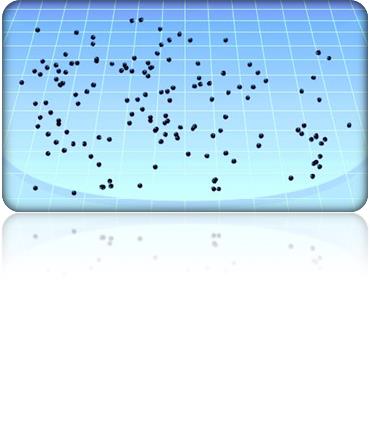

This value normally hinges on 1) the structure of the website and 2) the behavior of users. Structurally speaking, most sites consist of many web pages organized hierarchically via hyperlinks. Hence page popularity, hyperlink access, and clicks (see Diagram 1), for example, might be statistical information worth recording.

Demographic Profiling

Undoubtedly, visitors from various parts of the world display heterogeneous behavior. Likewise, particular network destinations seem to cater to a specific group of clients. A surge of visitor traffic coming from Poland, for instance, to a website written in Russian would ring a bell in the DDoS department (Miu et al., 2013).

Once enough information has been gathered, the server can establish the cumulative distribution function (CDF) pertinent to normal users for all of the statistical attributes enumerated.
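The idea can be sketched with an empirical CDF per attribute, built from values observed for normal users, and a rule that flags new values lying in its extreme upper tail. The `EmpiricalCDF` class and the 1% tail are assumptions for illustration; attributes where abnormally low values matter (for example, downtime) would need a lower-tail check instead.

```python
import bisect
from typing import List, Sequence


class EmpiricalCDF:
    """Empirical CDF of one statistical attribute observed for normal users."""

    def __init__(self, baseline: Sequence[float]) -> None:
        if not baseline:
            raise ValueError("baseline must not be empty")
        self.sorted_values: List[float] = sorted(baseline)

    def cdf(self, x: float) -> float:
        """Fraction of baseline observations less than or equal to x."""
        return bisect.bisect_right(self.sorted_values, x) / len(self.sorted_values)

    def is_anomalous(self, x: float, tail: float = 0.01) -> bool:
        """Flag x if at least (1 - tail) of the normal observations lie below it."""
        below = bisect.bisect_left(self.sorted_values, x) / len(self.sorted_values)
        return below >= 1.0 - tail
```

With a baseline of request rates 1 through 100, `cdf(50)` is `0.5`, a rate of 150 is flagged, and a rate of 50 is not.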

- Measurements Based on User-Application Interaction Monitoring

Another source of statistical data that may help to build a database for monitoring traffic anomalies and predicting attack trends is user-application interaction. Here the statistical attributes are:

Access Rate Over Time

This corresponds to uptime and downtime in the server section.

Rate per Application Resource

A counterpart to browsing behavior, except that, since it is a favorite target, surveillance efforts would probably concentrate on the application's login page.

Geographical Locations

This is a reference figure that serves the same function as demographic profiling. Again, web applications usually serve a particular group of users, and web administrators may collect their users' locations.

Response Latency

An indicator of when an application resource is being exhausted.

Rate of Application Responses

Depending on how the application is used, the rate of application responses such as 500 (internal server errors, i.e., application errors) and 404 (page not found errors) will vary.

(Katz, 2012)
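Several of these attributes (requests per application resource, response latency, and the share of 5xx and 404 responses) can be collected in one place. The `ApplicationMonitor` class below is a hypothetical sketch that only aggregates the raw figures; comparing them with a normal profile, for instance one built from the CDFs mentioned earlier, is left out.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from statistics import median
from typing import Dict, List


@dataclass
class ResourceStats:
    latencies_ms: List[float] = field(default_factory=list)
    statuses: List[int] = field(default_factory=list)


class ApplicationMonitor:
    """Collect per-resource response latency and status codes."""

    def __init__(self) -> None:
        self.stats: Dict[str, ResourceStats] = defaultdict(ResourceStats)

    def observe(self, path: str, latency_ms: float, status: int) -> None:
        s = self.stats[path]
        s.latencies_ms.append(latency_ms)
        s.statuses.append(status)

    def summary(self, path: str) -> Dict[str, float]:
        s = self.stats[path]
        n = len(s.statuses)
        if n == 0:
            return {"requests": 0, "median_latency_ms": 0.0,
                    "error_5xx_ratio": 0.0, "not_found_ratio": 0.0}
        return {
            "requests": n,
            "median_latency_ms": median(s.latencies_ms),
            "error_5xx_ratio": sum(500 <= c < 600 for c in s.statuses) / n,
            "not_found_ratio": sum(c == 404 for c in s.statuses) / n,
        }
```

Using the median rather than the mean keeps one pathological request from dominating the latency figure, while a sustained rise across many requests to, say, `/login` still shows up.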

- Measurements Based on Resource Consumption

Other data sources for calibration are the state, allocation, and consumption of resources such as CPU, memory, application threads, application states, connection tables, and more. Real-time awareness of resource consumption can be critical, since low-and-slow DDoS attacks strive to deplete these resources. For example, numerous prolonged, relatively "idle" open network connections that heavily drain resources might indicate that the server is under a connection-table-misuse DDoS attack, perhaps one typical of Slowloris. Likewise, an application that lingers over a task that should normally be completed in an instant might indicate a R.U.D.Y. (R-U-Dead-Yet) attack (Kenig, 2013).
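The "many long-lived, mostly idle connections" symptom can be checked against a snapshot of the connection table. In the sketch below, the `Connection` record, the `suspicious_idle_connections` function, and all three thresholds are hypothetical; a real server would read this state from its own connection bookkeeping.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Connection:
    conn_id: int
    opened_at: float         # seconds
    last_activity_at: float  # seconds; last time any bytes arrived
    bytes_received: int


def suspicious_idle_connections(conns: List[Connection], now: float,
                                min_age: float = 30.0, max_idle: float = 10.0,
                                min_bytes_per_sec: float = 50.0) -> List[int]:
    """Return ids of connections open for at least `min_age` seconds that are
    either idle for `max_idle` seconds or trickling data below
    `min_bytes_per_sec`, the low-and-slow pattern described above."""
    flagged = []
    for c in conns:
        age = now - c.opened_at
        if age < min_age:
            continue  # too young to judge
        idle = now - c.last_activity_at
        throughput = c.bytes_received / age
        if idle >= max_idle or throughput < min_bytes_per_sec:
            flagged.append(c.conn_id)
    return flagged
```

A single flagged connection may simply be a slow mobile client; what the text describes as suspicious is a large number of them at once, so in practice the count of flagged connections relative to the table size would be the signal.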

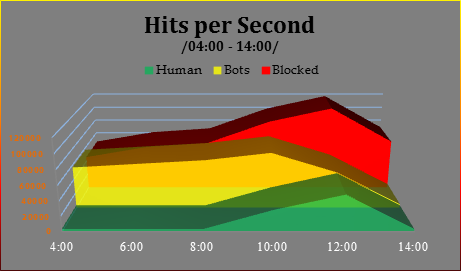

Anomaly Detection

Now that the profiles are ready and the necessary databases are stacked up and available, the detection of Layer 7 DDoS can be based on any indicators that deviate from what is expected. Diagram 1, for instance, reads DDoS attack traffic off the rate per application resource, browsing behavior attributes, and other relevant data in order to detect anomalies and discriminate between human beings and "good" (yellow) and "bad" (red) bots (Chai, 2013).

Diagram 1

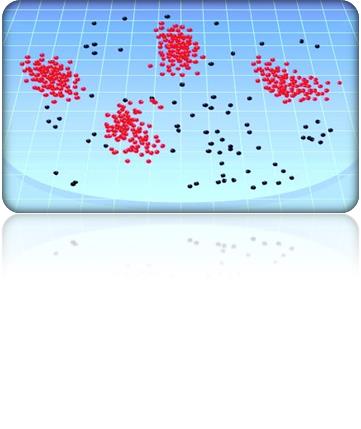

Another example sheds some light on how this framework might spot anomalies through statistical attributes such as demographic profiling and geographical locations:

Diagram 2

(Beitollahi & Deconinck, 2011)
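One way to quantify such a shift in where traffic comes from (like the surge from Poland to a Russian-language site mentioned above) is to compare the current distribution of source countries with the baseline using the total variation distance. The functions below are a hypothetical sketch; mapping IP addresses to countries requires a GeoIP database, which is not shown, and the alert threshold would have to be tuned.

```python
from collections import Counter
from typing import Dict, Iterable


def shares(labels: Iterable[str]) -> Dict[str, float]:
    """Convert a stream of labels (e.g. country codes) into proportions."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {k: v / total for k, v in counts.items()} if total else {}


def total_variation(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Total variation distance: 0 for identical distributions, 1 for disjoint ones."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)
```

If normal traffic is 90% RU and 10% UA but the current window is 30% RU and 70% PL, the distance is 0.7, a shift large enough to warrant a closer look even if the overall request volume has barely changed.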

Anomaly Detection via Algorithm and Blacklisting

Regarding the other measurements in the statistical attributes pack, a rate-shaping algorithm that monitors clients might prove productive by assigning them values, tokens, trust units, or whatever this indicator is called. Essentially, it would ensure that clients "request no more than a configurable number of objects per time period…If the client requests more than the configurable number, the client's IP address is blacklisted for a specified time period and subsequent requests are denied until the address has been freed from the blacklist" (MacVittie, 2008, par. 10).
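The quoted behavior can be sketched as a fixed-window counter per IP address combined with a time-limited blacklist. The `RateShaper` class and its parameter names are hypothetical, and the fixed counting window is an assumption, since the quote does not say how the "time period" is measured.

```python
from typing import Dict, Tuple


class RateShaper:
    """Allow at most `max_requests` per `period` seconds per client IP; a client
    that exceeds the limit is blacklisted for `ban_seconds`."""

    def __init__(self, max_requests: int, period: float, ban_seconds: float) -> None:
        self.max_requests = max_requests
        self.period = period
        self.ban_seconds = ban_seconds
        self.windows: Dict[str, Tuple[float, int]] = {}  # ip -> (window start, count)
        self.blacklist: Dict[str, float] = {}             # ip -> ban expiry time

    def allow(self, ip: str, now: float) -> bool:
        expiry = self.blacklist.get(ip)
        if expiry is not None:
            if now < expiry:
                return False                 # still blacklisted: deny
            del self.blacklist[ip]           # ban expired: address is freed
            self.windows.pop(ip, None)
        start, count = self.windows.get(ip, (now, 0))
        if now - start >= self.period:       # start a new counting window
            start, count = now, 0
        count += 1
        self.windows[ip] = (start, count)
        if count > self.max_requests:
            self.blacklist[ip] = now + self.ban_seconds
            return False
        return True
```

With `max_requests=3`, `period=10`, and `ban_seconds=60`, a client sending requests at t = 0, 1, 2, 3 gets `True, True, True, False`, stays blocked until t = 63, and is then treated as a fresh client.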

Blacklisting

Generally speaking, blacklisting is a simple countermeasure, a kind of short-circuit mechanism, which blocks suspicious or overtly malicious users on the basis of their IP addresses. The ban, however, is not permanent, as IP addresses may be dynamically assigned and bot computers can be remediated. Whitelisting, conversely, preapproves traffic coming from specific IP addresses for a given period of time or volume of traffic. It is the digital equivalent of probation (Miu et al., 2013).

Progressive Challenges

CAPTCHA puzzles

These challenge-response tests are regarded as the most promising instrument in the fight against Layer 7 DDoS. Especially effective against brute-force attacks, CAPTCHA authenticates and whitelists users after they successfully answer a random challenge, typically distorted text designed to defeat automated optical character recognition (OCR) (Beitollahi & Deconinck, 2011). Nevertheless, CAPTCHA has its own drawbacks and weaknesses, not least the friction it adds for legitimate users, so less intrusive challenges are also used:

JavaScript Authentication: A snippet of JavaScript code embedded in the HTML is sent to suspicious clients. Presumably, only clients with a full JavaScript engine can carry out the computation.

HTTP Redirect Authentication: The server deliberately answers with an HTTP 302 redirect, which legitimate browsers follow automatically but many automated tools do not, thereby distinguishing the two.

HTTP Cookie Authentication: Here the cookie capability of the client's browser is put to the test.
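The redirect and cookie challenges can be combined: a client without a valid cookie receives a 302 that sets a signed cookie and points back to the same URL, and only a client that stores the cookie and follows the redirect gets through. The sketch below is framework-agnostic and hypothetical throughout: `SECRET`, the cookie name `challenge`, the 600-second lifetime, and binding the token to the client IP are illustrative choices (IP binding can inconvenience users whose address changes).

```python
import hashlib
import hmac
import time
from typing import Dict, Optional, Tuple

SECRET = b"replace-with-a-random-server-secret"  # hypothetical server-side key


def make_token(client_ip: str, expires: int) -> str:
    """Token = expiry time plus an HMAC over (client IP, expiry)."""
    msg = f"{client_ip}|{expires}".encode()
    sig = hmac.new(SECRET, msg, hashlib.sha256).hexdigest()
    return f"{expires}:{sig}"


def token_valid(client_ip: str, token: str, now: int) -> bool:
    try:
        expires_str, sig = token.split(":", 1)
        expires = int(expires_str)
    except ValueError:
        return False  # malformed token
    expected = make_token(client_ip, expires).split(":", 1)[1]
    return now < expires and hmac.compare_digest(sig, expected)


def challenge_gate(client_ip: str, path: str, cookies: Dict[str, str],
                   now: Optional[int] = None, ttl: int = 600) -> Tuple[int, Dict[str, str]]:
    """Return (status, response headers). 200 means the request may proceed;
    302 means the client must first store the cookie and follow the redirect."""
    now = int(time.time()) if now is None else now
    token = cookies.get("challenge")
    if token and token_valid(client_ip, token, now):
        return 200, {}
    new_token = make_token(client_ip, now + ttl)
    return 302, {"Location": path,
                 "Set-Cookie": f"challenge={new_token}; Path=/; HttpOnly"}
```

Because the token is signed rather than stored, the server keeps no per-client state, which matters when the point is to conserve resources under attack. A bot that implements a cookie jar and follows redirects will pass, so this is only the first rung of a progressive ladder that can escalate to JavaScript challenges or CAPTCHA.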

Equipment and Services for MITIGATION of Layer 7 DDoS Attacks

- Non-Specialized DDoS Equipment and Services

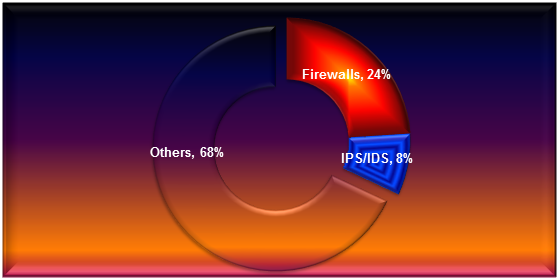

Firewall, IPS, IDS

These protection methods are geared more toward preventing intrusion attempts than DDoS attacks. For effective DDoS mitigation, a device must have a "big picture" capability to keep track of and analyze all sessions, rather than the sporadic session-by-session analysis this trinity performs. To put it another way, these devices lack anomaly detection capabilities (Radware, 2013).

One more nail in the coffin of these devices is that they are deployed in-line and too close to the server, so they can suffer from resource exhaustion themselves. Furthermore, Layer 7 DDoS raids exploit a firewall weakness: by standard practice, services such as HTTP (TCP port 80) and HTTPS (TCP port 443) are left open, so both legitimate and illegitimate protocols and applications pass through these ports (the Code Red worm, for example, spread via port 80) (Prabha & Anitha, 2010).

Because of the way they function, firewalls, IPS, and IDS create a new entry in their connection tables for every malicious DDoS connection attempt; eventually the connection tables are depleted, which in turn denies service to legitimate users (Radware, 2013). As a result, these appliances accounted for one-third of outage and bottleneck service disruptions in 2011 (Herberger, 2012).

Diagram 3

Devices Contributing to Availability Problems in 2011

Web Application Firewalls (WAFs)

Designed specifically to protect websites and web applications (built with technologies such as JSP, PHP, Perl, ASP, ASPX, and other common gateway interfaces), this kind of technology operates similarly to IDS and IPS, inspecting both ingress and egress traffic of websites and information portals for signature threats, anomalies, and data leakage (for example, SQL information or credit card numbers); it also has engines that learn from normal traffic patterns (http://www.osisecurity.com.au).

Moreover, compared with standard firewalls, a WAF provides superior content filtering and more granular control over incoming traffic, as well as advanced protection against malicious traffic that exploits each "allowed port" (Cobb). Despite these advantages, some security experts are skeptical of the technology's effectiveness, citing the difficulty of coping with increased latency, numerous false positives, and an inappropriate network location (Jirbandey, 2013; Radware, 2013).

Diagram 4

An illustration of how scarce DDoS specialists are compared with general IT "polymaths."

- Dedicated DDoS Protection Equipment and Services

- On-Premise Hardware

Pros: Specially designed and dedicated to detecting and mitigating DDoS attacks, this type of appliance is usually deployed as the first device in the network, before the access router. The protection it provides is automatic and immediate, and the device is fine-tuned to filter malicious Layer 7 traffic effectively (Radware, 2013).

Cons: This on-premise hardware is costly, it requires trained security engineers, and it is dependent on regular updating. Additionally, it cannot effectively handle volumetric attacks (Leach, 2013).

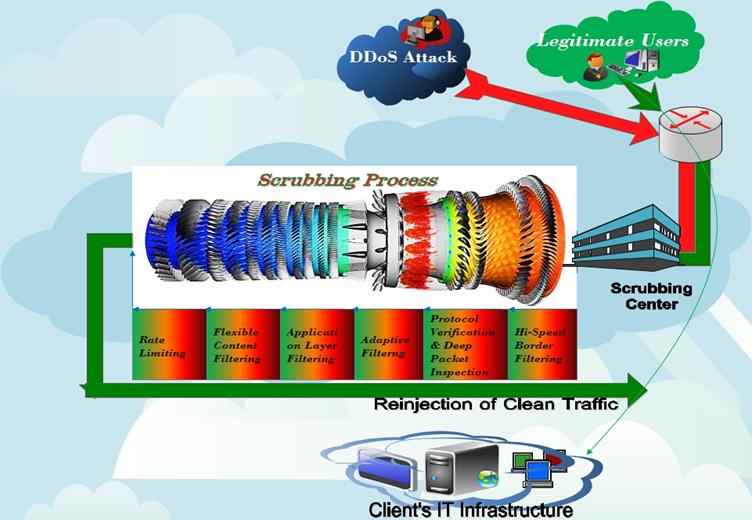

- Cloud Mitigation Provider

Benefits

Usually provided by teams with great expertise in the field, this service's main advantage is the massive amount of bandwidth it has in reserve. Cloud mitigation works by redirecting the customer's traffic via the Border Gateway Protocol (BGP) to the provider's scrubbing centers before it reaches the customer. There the traffic is "absorbed" and "cleansed," and the clean traffic is reinjected on its way to the customer (see Diagram 5).

According to some security experts, "cloud mitigation providers are the logical choice for enterprises' DDoS protection needs. They are the most effective and scalable solution to keep up with the rapid advances in DDoS attacker tools and techniques" (Leach, 2013, par. 14).

Diagram 5

Shortcomings

Because cloud mitigation is by nature designed around absorbing enormous traffic volumes and load, low-and-slow DDoS attacks occasionally manage to slip through the net. A case of the cloud's insensitivity to earthly problems, you might say. On top of that, only inbound traffic is inspected, which gives limited visibility (Miu et al., 2013).

- Hybrid

As Radware suggests:

"Hybrid DDoS solution aspires to offer best-of-breed mitigation solution by combining on-premise and cloud mitigation into a single, integrated solution…The hybrid solution also shares essential information about the attack between the on-premise mitigation devices and the cloud devices in order to accelerate and to enhance the mitigation of the attack once it reaches the cloud" (Radware, 2013, p. 6).

Content Delivery Network (CDN)

CDN providers deliver website content on behalf of customers around the globe. Owing to the dispersed server distribution of CDN providers and the size of their infrastructure, it is vastly more difficult for DDoS attackers to overwhelm them (G.F., 2013). In terms of structure, a CDN provider might use tiered CDN servers or proxies to store and direct the content (Zadjmool, 2013).

Criticism

"The problem with this approach is that backend typically trust the CDN unconditionally, making them susceptible to attacks spoofing as traffic from the CDN. Ironically, the presence of CDN can inadvertently worsen a DDoS attack by adding its own headers occupying even more bandwidth" (Miu et al., 2013, p. 10).
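One partial remedy for the unconditional trust described in this quotation is for the backend to honor CDN-supplied client information only when the TCP peer itself lies inside the CDN's address ranges. In the sketch below, `CDN_RANGES` uses RFC 5737 documentation prefixes as placeholders for the provider's published list, and the `X-Forwarded-For` header is an assumption; CDNs differ in which header they use. It does nothing about the extra header bandwidth the quote also mentions.

```python
import ipaddress
from typing import Dict, Sequence, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]

# Hypothetical CDN edge ranges (RFC 5737 documentation prefixes used as
# placeholders). Real ranges come from the CDN provider and change over time.
CDN_RANGES: Sequence[Network] = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def from_cdn(peer_ip: str, ranges: Sequence[Network] = CDN_RANGES) -> bool:
    """True if the TCP peer address (never a client-supplied header)
    belongs to one of the CDN's ranges."""
    addr = ipaddress.ip_address(peer_ip)
    return any(addr in net for net in ranges)


def effective_client_ip(peer_ip: str, headers: Dict[str, str]) -> str:
    """Honor X-Forwarded-For only for requests that really came via the CDN;
    anyone connecting directly is judged by its own address."""
    if from_cdn(peer_ip):
        forwarded = headers.get("X-Forwarded-For", "")
        if forwarded:
            return forwarded.split(",")[0].strip()
    return peer_ip
```

An attacker who connects directly and forges `X-Forwarded-For` is thus still rate-limited and blacklisted under its real address. Going further, a firewall rule that accepts web traffic only from the CDN ranges would stop direct-to-origin attacks altogether.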

Internet Service Provider (ISP)

Because it seems convenient, some firms turn to their ISPs for DDoS mitigation. However, they should take into account that, in general, ISPs do not specialize in DDoS mitigation and do not support cloud protection. The latter point is important because many web applications are now split between cloud services and private business data centers. Furthermore, relying on an ISP is a feasible option only if your organization is not multi-homed, i.e., does not use several ISPs (Leach, 2013).

Two unconventional techniques

Darknets

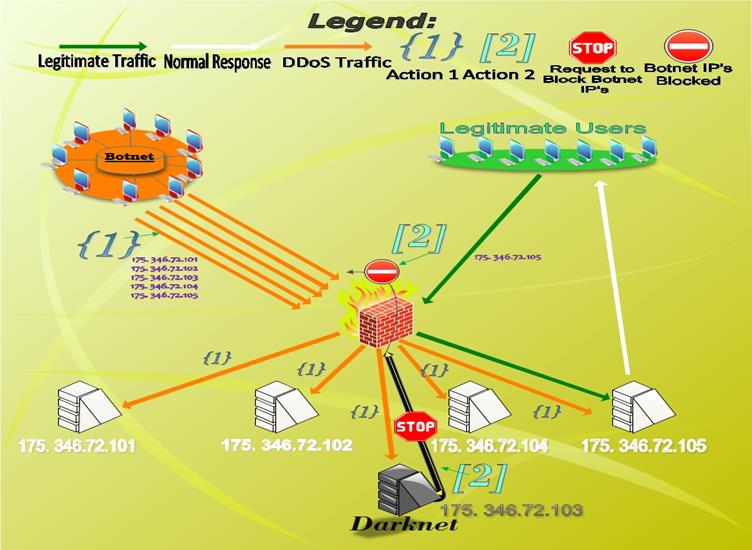

What sets this mitigation technique apart is that, unlike the others, a darknet must be set up before the DDoS event commences. To set up this preparatory defense measure, a customer purchases a range of IP addresses, for instance, 203.0.113.101-105. In this configuration, let's say that addresses .101, .102, .104, and .105 are allocated to the web servers that support the customer's website, while .103 is not, because it is kept as a darknet (Access, 2012).

Unaware of the darknet address, a malicious actor may direct DDoS activities against the entire range. Consequently, any traffic that hits the darknet can be presumed malicious. Security experts trying to alleviate the DDoS aggression can therefore extract the client IP addresses hitting the darknet and blacklist them, which eventually reduces the number of attacking IP addresses and weakens the onslaught (see Diagram 6).

Diagram 6
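The darknet logic reduces to two steps: collect every source that contacted the unassigned address, then drop traffic from those sources everywhere else. The sketch below works on (source, destination) pairs and is hypothetical in its names; it uses the documentation address 203.0.113.103 as a stand-in for the unassigned .103 address of the example. Because sources can be spoofed or may be harmless scanners, the resulting list would in practice be expired after a while rather than kept forever.

```python
from typing import Iterable, List, Set, Tuple

Flow = Tuple[str, str]  # (source IP, destination IP)

# Hypothetical darknet: the unassigned ".103" address of the example range,
# written here with the RFC 5737 documentation prefix 203.0.113.0/24.
DARKNET: Set[str] = {"203.0.113.103"}


def darknet_offenders(flows: Iterable[Flow], darknet: Set[str] = DARKNET) -> Set[str]:
    """Sources that sent traffic to a darknet address; since no legitimate
    service lives there, they are presumed to be attack nodes."""
    return {src for src, dst in flows if dst in darknet}


def drop_blacklisted(flows: Iterable[Flow], blacklist: Set[str]) -> List[Flow]:
    """Keep only flows whose source is not blacklisted."""
    return [(src, dst) for src, dst in flows if src not in blacklist]
```

An attacker spraying the whole range exposes each bot as soon as it touches .103, whereas legitimate visitors, who resolve the site's name to the real servers, never do.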

"Lite" Sites

Going "lite" after a service has been hit by Layer 7 DDoS is, inter alia, a rather innovative option. This mode is a static counterpart of the dynamic web content that can be put into action in emergency situations to maintain an appearance of availability. The concept seems particularly fruitful against application-layer DDoS attacks that seek to overburden dynamic web services (Zadjmool, 2013).

Mitigating Specific Layer 7 DDoS Attacks and Weapons

- HTTP POST DDOS

Rate limiting is enforced by classifying each request and keeping a watchful eye on its transfer speed and size, then limiting the number of extremely slow connections per CPU core and rejecting request bodies above the maximum allowable size (The OWASP Foundation, 2010; F5 Networks, Inc., 2013).
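The two checks can be sketched as a verdict on an in-progress upload: reject bodies whose declared size exceeds the maximum, and drop uploads whose average rate stays below a floor once a short grace period has passed. Every constant, the `Upload` record, and the `verdict` and `slow_connection_budget` functions are hypothetical; the budget shows how a per-core cap on tolerated slow connections might be derived.

```python
import os
from dataclasses import dataclass

# All limits below are hypothetical values chosen for illustration.
MAX_BODY_BYTES = 1_000_000   # maximum allowable request body
MIN_BYTES_PER_SEC = 500.0    # slowest acceptable upload rate
GRACE_SECONDS = 5.0          # do not judge the rate of very young uploads
SLOW_CONNS_PER_CORE = 50     # cap on concurrently tolerated slow uploads


@dataclass
class Upload:
    declared_length: int  # value of the Content-Length header
    received: int         # body bytes received so far
    elapsed: float        # seconds since the body started arriving


def verdict(u: Upload) -> str:
    """Classify an in-progress POST body."""
    if u.declared_length > MAX_BODY_BYTES:
        return "reject: body too large"
    if u.elapsed >= GRACE_SECONDS and u.received / u.elapsed < MIN_BYTES_PER_SEC:
        return "drop: too slow"
    return "ok"


def slow_connection_budget() -> int:
    """How many slow uploads the server tolerates at once, scaled per core."""
    return SLOW_CONNS_PER_CORE * (os.cpu_count() or 1)
```

An attack that announces a large `Content-Length` and then sends a few bytes every few seconds fails the rate check after the grace period. The grace period and the per-core budget soften the impact on genuinely slow clients, which a hard rate floor alone would penalize.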

- HTTP GET DDOS

First, IIS web servers or those having timeout limits for HTTP headers are usually not susceptible. Second, load balancers, reverse proxies, and Apache modules, such as mod_antiloris, might repel this kind of cyber attack. Third, measures such as "delayed binding" / "TCP Splicing" are feasible alternatives as well (The OWASP Foundation, 2010).
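A timeout limit for HTTP headers can be illustrated with Python's `asyncio` streams: the server gives each client a fixed deadline to deliver the complete header block and answers 408 otherwise. This is a generic sketch, not how IIS or mod_antiloris implement it; `HEADER_TIMEOUT`, `read_headers`, `handle_connection`, and `serve` are hypothetical names, and the 10-second deadline is an assumed value.

```python
import asyncio

# Hypothetical deadline for receiving the complete header block.
HEADER_TIMEOUT = 10.0


async def read_headers(reader: asyncio.StreamReader,
                       timeout: float = HEADER_TIMEOUT) -> bytes:
    """Read up to the blank line that ends the HTTP headers.

    The deadline covers the whole header block, so a client that trickles
    one header line every few seconds still runs out of time; raises
    asyncio.TimeoutError when that happens."""
    return await asyncio.wait_for(reader.readuntil(b"\r\n\r\n"), timeout)


async def handle_connection(reader: asyncio.StreamReader,
                            writer: asyncio.StreamWriter) -> None:
    try:
        await read_headers(reader)
        writer.write(b"HTTP/1.1 200 OK\r\nContent-Length: 2\r\n"
                     b"Connection: close\r\n\r\nok")
    except asyncio.TimeoutError:
        writer.write(b"HTTP/1.1 408 Request Timeout\r\nConnection: close\r\n\r\n")
    except (asyncio.IncompleteReadError, asyncio.LimitOverrunError):
        writer.write(b"HTTP/1.1 400 Bad Request\r\nConnection: close\r\n\r\n")
    finally:
        writer.close()  # release the connection slot instead of holding it open


async def serve(host: str = "127.0.0.1", port: int = 8080) -> None:
    server = await asyncio.start_server(handle_connection, host, port)
    async with server:
        await server.serve_forever()
```

The server would be started with `asyncio.run(serve())`. Using one deadline for the whole header block, rather than an idle timeout per read, is the key design choice: Slowloris-style clients stay just active enough to defeat idle timeouts, but they cannot finish their headers within a fixed total budget.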

- Low-Orbit Ion Cannon

Hackers using this tool are forced to use anonymizers, because otherwise it reveals their real IP addresses. For this reason, blocking all agent nodes associated with an anonymizing service (e.g., Tor) should be considered a first step, and not only against LOIC (david b, 2012). In addition, "mobile LOIC pages can be reused against more than one target, so having a list of malicious referrers might also be beneficial" (Imperva, 2012, p. 12).

- Slowloris

Renowned for its low rate and long period between headers, Slowloris may be countered with load balancers and by switching to a Microsoft-based server platform, as MS IIS under normal circumstances is not vulnerable to attack from this tool (Access, 2012).

- Slow Read

The proposal here is to configure the server to disregard connection requests that advertise an abnormally small TCP window size (Imperva, 2012).

For a thorough detection-and-mitigation defense, implementing other mechanisms that counter DDoS, for example progressive challenges (CAPTCHA or JavaScript injection), might contribute significantly to warding off these attacks and fortifying the overall defense of the targeted entity.

Conclusion

In spite of the long list of detection and mitigation measures against Layer 7 DDoS presented here, there is no universal cure-all, no magic glue that can patch every security hole in a given system. Besides, every case has its own specifics. Nevertheless, making the holes smaller is what these mechanisms are for. In the end, if the road to DDoS success is long and winding, a road to perdition, so to speak, the wrongdoer might start to feel discomfort: sweaty palms, disheartenment, and paranoia that he will get stuck in one of the holes, falling prey to his own game.

Reference List


- Abliz, M. (2011). Internet Denial of Service Attacks and Defense Mechanisms. Retrieved on 05/11/2013 from http://people.cs.pitt.edu/~mehmud/docs/abliz11-TR-11-178.pdf

- Access (2012). DEFENDING AGAINST [...]. Retrieved on 05/11/2013 from https://s3.amazonaws.com/access.3cdn.net/7ba8fcbc60c8271c1c_fdm6i2vsa.pdf

- Beitollahi, H. & Deconinck, G. (2011). Tackling Application-layer DDoS Attacks. Retrieved on 05/11/2013 from http://www.sciencedirect.com/science/article/pii/S1877050912004139

- Chai, E. (2013). Incapsula's Five-Ring Approach to Application Layer DDoS Protection. Retrieved on 05/11/2013 from http://www.incapsula.com/the-incapsula-blog/item/810-application-layer-7-ddos-protection

- Chickowski, E. (2012). Using Human Behavioral Analysis to Stop DDOS at Layer 7. Retrieved on 05/11/2013 from http://www.networkcomputing.com/security/using-human-behavioral-analysis-to-stop/240007110

- Cobb, M. Defending layer 7: A look inside application-layer firewalls. Retrieved on 05/11/2013 from http://searchsecurity.techtarget.com/tip/Defending-layer-7-A-look-inside-application-layer-firewalls

- david b. (2012). Generations of DoS attacks 2: Layer 4, Layer 7 and Link-Local IPv6 attacks. Retrieved on 05/11/2013 from http://privacy-pc.com/articles/generations-of-dos-attacks-2-layer-4-layer-7-and-link-local-ipv6-attacks.html

- F5 Networks, Inc. (2013). Mitigating DDoS Attacks with F5 Technology. Retrieved on 05/11/2013 from http://www.f5.com/pdf/white-papers/mitigating-ddos-attacks-tech-brief.pdf

- G.F., (2013). How does a denial-of-service attack work? Retrieved on 05/11/2013 from http://www.economist.com/blogs/economist-explains/2013/08/economist-explains-16

- Herberger, C. (2012). 4 Massive Myths of DDoS. Retrieved on 05/11/2013 from http://blog.radware.com/security/2012/02/4-massive-myths-of-ddos/

- http://www.osisecurity.com.au. Web Application Firewalls - Layer 7 inspection. Retrieved on 05/11/2013 from http://www.osisecurity.com.au/solutions/web-application-firewalls

- http://www.tech21century.com, (2012). How to block HTTP DDoS Attack with Cisco ASA Firewall. Retrieved on 05/11/2013 from http://www.tech21century.com/how-to-block-http-ddos-attack-with-cisco-asa-firewall/

- Imperva (2012). Denial of Service Attacks: A Comprehensive Guide to Trends, Techniques, and Technologies. Retrieved on 05/11/2013 from https://www.imperva.com/docs/HII_Denial_of_Service_Attacks-Trends_Techniques_and_Technologies.pdf

- Jirbandey, A. (2013). CTO Blog – Web Application Firewalls. Retrieved on 05/11/2013 from http://www.igxglobal.com/news/cto-blog-web-application-firewalls/

- Katz, O. (2012). Protecting Against Application DDoS Attacks with BIG-IP ASM A Three Step Solution. Retrieved on 05/11/2013 from http://www.f5.com/pdf/white-papers/ddos-attacks-asm-tb.pdf

- Kenig, R. (2013). Why Low & Slow DDoS Application Attacks are Difficult to Mitigate. Retrieved on 05/11/2013 from http://blog.radware.com/security/2013/06/why-low-slow-ddosattacks-are-difficult-to-mitigate/

- Leach, S. (2013). Four ways to defend against DDoS attacks. Retrieved on 05/11/2013 from http://www.networkworld.com/news/tech/2013/091713-defending-against-ddos-273919.html

- MacVittie, L. (2008). Layer 4 vs. Layer 7 DoS Attack. Retrieved on 05/11/2013 from https://devcentral.f5.com/articles/layer-4-vs-layer-7-dos-attack#.UnpVCie735M

- Manthena, R. (2011). Application-layer Denial of Service. Retrieved on 05/11/2013 from http://forums.juniper.net/t5/Security-Mobility-Now/Application-layer-Denial-of-Service/ba-p/103306

- Miu, T.N., Hui, A., Lee, W., Luo D., Chung, A., and Wong, J., (2013). Universal DDoS Mitigation Bypass. Retrieved on 05/11/2013 from https://media.blackhat.com/us-13/US-13-Lee-Universal-DDoS-Mitigation-Bypass-WP.pdf

- Prabha, S. & Anitha, R. (2010). Mitigation of Application Traffic DDoS Attacks with Trust and AM Based HMM Models. Retrieved on 05/11/2013 from core.kmi.open.ac.uk/download/pdf/823543

- Radware, (2013). On-Premise, Cloud or Hybrid? Approaches to Mitigate DDoS Attacks. Retrieved on 05/11/2013 from http://www.datacom.cz/files_datacom/radware_approaches_to_mitigate_ddos_attacks_wp.pdf

- Surace, K. (2013). Network vs. Apps. Retrieved on 05/11/2013 from http://www.infotech.com/research/special-letter-all-your-data-is-being-stolen-as-you-read-this

- The OWASP Foundation (2010). H.....t.....t....p.......p....o....s....t . Retrieved on 05/11/2013 from https://www.owasp.org/images/4/43/Layer_7_DDOS.pdf

- Zadjmool, R. (2013). A Risk Based Approach to DDoS Protection. Retrieved on 05/11/2013 from http://www.ccul.org/research/2013/13_0531ARiskBasedApproachtoDDoS.pdf

Diagrams

- Diagram 1 – Based on "Hits per Second" graph by Eldad Chai in Incapsula's Five-Ring Approach to Application Layer DDoS Protection. Retrieved on 05/11/2013 from http://www.incapsula.com/the-incapsula-blog/item/810-application-layer-7-ddos-protection

- Diagram 2 – Based on "Fig. 1: Source IP address distribution" graph provided by Beitollahi, H. & Deconinck, G. on page 4 of Tackling Application-layer DDoS Attacks. Retrieved on 13/10/2013 from http://www.sciencedirect.com/science/article/pii/S1877050912004139

- Diagram 3 – Based on "Firewall and IPS Security Devices Accounted for 1/3 of Availability Outage/Bottleneck Problems in 2011" graph by Carl Herberger in 4 Massive Myths of DDoS. Retrieved on 05/11/2013 from http://blog.radware.com/security/2012/02/4-massive-myths-of-ddos/

- Diagram 4 – Based on "Access to Skills" graph by Carl Herberger in 2011: Why has Group Anonymous Been So Successful? Retrieved on 05/11/2013 from http://blog.radware.com/security/2012/01/2011-why-has-group-anonymous-been-so-successful/

- Diagram 5 – Based on "Peakflow SP TMS diversion/reinjection deployment" graph provided by Arbor on page 12 of The Growing Threat of Application-Layer DDoS Attacks. Retrieved on 05/11/2013 from http://whitepapers.datacenterknowledge.com/content12127

- Diagram 6 – Based on a graph provided by Access on page 7 of DEFENDING AGAINST [...]. Retrieved on 05/11/2013 from https://s3.amazonaws.com/access.3cdn.net/7ba8fcbc60c8271c1c_fdm6i2vsa.pdf; and based on "Comprehensive Filtering" graph provided by Nexusguard. Retrieved on 05/11/2013 from http://www.nexusguard.com/solutionsclearddos.htm