Deep Packet Inspection in Cloud Containers

Cloud-Based Applications and Protocols

In the previous article, we established that security in cloud-based applications is important, and that searching for vulnerabilities in cloud applications is not much harder than, or much different from, searching for them in web-based applications. In this article we'll take a different approach: we'll look at the protocols that cloud-based applications use under the hood and apply deep packet inspection techniques to look for malicious traffic.

Every cloud-based application is running on top of a protocol that allows the clients to communicate with the server in a standardized way; otherwise, the client applications wouldn't be able to communicate with the server at all. If we have a room full of people and everybody speaks a different language, they won't be able to communicate. In order to reach a common goal, they would have to agree on a way to interact among themselves, thus forming a standard for communication that everybody would understand.

There are many protocols that we use in our daily lives when communicating with cloud-based applications, perhaps without even realizing it. The most commonly used protocols are the following:

- HTTP

- HTTPS

- DNS

- SMTP

- Custom Protocols

To observe the traffic going to and coming from cloud applications, we're going to use Docker for building, shipping and running dockerized applications. Docker keeps gaining popularity and continues to be the most used container platform. Cloud-service providers also use it to deploy applications to the cloud; one example is AWS, which can run Docker applications directly.

To analyze the traffic going to and coming from Docker applications, we'll use Packetbeat, which ships the captured traffic data to Elasticsearch, where it can be analyzed and visualized in real time with Kibana.

Introduction to Packetbeat

We'll assume that Docker is already installed and ready to use, since installing it is fairly easy. You can check whether Docker is installed by issuing the following command:

    # docker --version
    Docker version 1.7.1, build df2f73d-dirty

After ensuring that Docker is installed, we can install Packetbeat, which requires only libpcap, a system-independent library for network traffic capture that lets us capture packets as they arrive on a network interface.

We can install Packetbeat fairly easily by pulling the proteansec/packetbeat image from Docker Hub.

# docker pull proteansec/packetbeat

Then we have to create the packetbeat.yml configuration file, which must include the following categories, each containing specific information to configure Packetbeat:

- Interfaces: This specifies the interface on which we would like to capture the traffic. We can use any to specify that we would like to capture traffic on all connected interfaces.

- Protocols: We can monitor only a limited number of protocols for now, including DNS, HTTP, Memcache, MySQL, PostgreSQL, Redis, Thrift, and MongoDB. For each protocol, we must specify the ports on which the application using it is running.

- Output: This specifies where the analyzed traffic information will be stored. The currently supported outputs are Elasticsearch, Logstash, and a file. In most cases, we want to use the Elasticsearch output because it allows us to easily search through the analyzed data as well as present it in a nice-looking graph with Kibana.

A complete packetbeat.yml configuration file can be seen below, where we've made the assumption that Elasticsearch is accessible on the local host on port 9200. You can save packetbeat.yml anywhere on the filesystem, but it's advisable that you create the /etc/packetbeat/ directory and put the configuration file in there; this is already being done by the container itself.

    interfaces:
      device: docker0
    protocols:
      dns:
        ports: [53]
        include_authorities: true
        include_additionals: true
      http:
        ports: [80, 8080, 8000, 5000, 8002]
      memcache:
        ports: [11211]
      mysql:
        ports: [3306]
      pgsql:
        ports: [5432]
      redis:
        ports: [6379]
      thrift:
        ports: [9090]
      mongodb:
        ports: [27017]
    output:
      elasticsearch:
        enabled: true
        hosts: ["localhost:9200"]
    shipper:
      geoip:
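Because the protocols section is just a mapping from a protocol name to a list of ports, it can be generated instead of typed by hand. The following is a minimal Python sketch, not part of Packetbeat itself: the helper name render_protocols is hypothetical, and the output simply mirrors the layout of the configuration file shown above.

```python
def render_protocols(ports_by_protocol):
    """Render a 'protocols:' YAML section from a {name: [ports]} mapping.

    Hypothetical helper for illustration only. It mirrors the layout of the
    packetbeat.yml in this article and only checks that each port is in the
    valid TCP/UDP range.
    """
    lines = ["protocols:"]
    for name, ports in ports_by_protocol.items():
        for port in ports:
            if not 0 < port < 65536:
                raise ValueError(f"invalid port {port} for protocol {name}")
        port_list = ", ".join(str(p) for p in ports)
        lines.append(f"  {name}:")
        lines.append(f"    ports: [{port_list}]")
    return "\n".join(lines) + "\n"


# Example: monitor HTTP on two ports and MySQL on its default port.
section = render_protocols({"http": [80, 8080], "mysql": [3306]})
print(section, end="")
```

The generated text can be pasted into packetbeat.yml next to the interfaces and output sections; protocol-specific options such as include_authorities would still have to be added by hand.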

Afterwards we can start the Packetbeat container with the following command, which gives it access to the host's network interfaces and services. Once the container is started, we use the docker exec command to obtain command-line access to it and verify that the host's Elasticsearch port 9200 is actually accessible from inside the container.

    # docker run -d --net=host proteansec/packetbeat
    # docker exec -it $(docker ps -l -q) /bin/bash
    root@container:/# sudo -i
    root@container:/# netstat -luntp
    Active Internet connections (only servers)
    Proto Recv-Q Send-Q Local Address           Foreign Address         State       PID/Program name
    tcp6       0      0 :::9200                 :::*                    LISTEN      -
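The same reachability check can also be scripted instead of reading netstat output by eye. The sketch below is only an illustration using Python's standard socket module; the function name port_is_open is hypothetical, and it assumes a Python interpreter is available wherever the check is run.

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and tries each address in turn.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False
```

Calling port_is_open("localhost", 9200) from inside the Packetbeat container should return True when Elasticsearch is reachable through the shared host network.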

To build the Packetbeat image manually, we can use its Dockerfile, which provides many configuration parameters that let us change how Packetbeat runs inside the container. As a result, the Packetbeat container can be up and running with the one simple command shown below. Note that Packetbeat is configured through the input parameters we supply when running docker. In addition, the GeoIP database is downloaded and the Kibana dashboards are automatically downloaded and applied to Kibana, so we can instantly view the graphs and analyze the traffic without further ado.

# docker run --name packetbeat -d --net=host proteansec/packetbeat app:start

Note that the Packetbeat container needs access to the host network, so we have to pass the "--net=host" option when running the container. This lets the container access the interfaces on the host, making it possible to sniff the traffic going to and coming from every dockerized application running on the same host.

Topbeat

Besides Packetbeat, there is also Topbeat, which, rather than analyzing the traffic going to and coming from dockerized applications, collects and presents statistics about other parts of the environment, mainly the CPU, memory and disk usage:

- System load

- Disk usage

- Process status

- Memory usage

- CPU usage

We can get Topbeat by running the following command, which will download the already built image from Docker Hub, but you can also download the Dockerfile manually and build the image yourself.

# docker run --name topbeat -d --privileged proteansec/topbeat app:start

Note that Topbeat needs to run in privileged mode, because it needs to access system information from within a container, which is only possible when the container itself is run with enough privileges.

After the Topbeat container has been run, we can access the Kibana interface, which will contain a new Topbeat index pattern as presented below:

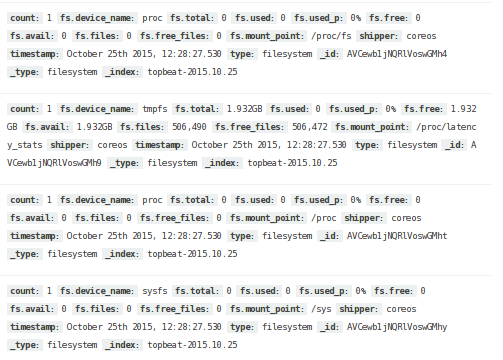

Back in the Discover tab, we can see the Topbeat entries, as shown below.

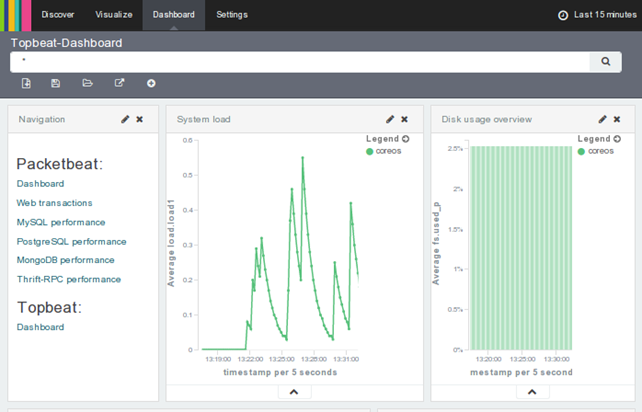

A nicer presentation of gathered data is available through the Topbeat dashboard, which can be seen below.

The Docker0 Network Interface

In the previous section, we specified the docker0 network interface in the interfaces section of the packetbeat.yml configuration file. Note that, at the time of this writing, we can only choose a single interface for each Packetbeat instance, so if we would like to monitor multiple network interfaces at the same time, we have to start additional Packetbeat instances, each of which sniffs network traffic on a single network interface and sends it to Elasticsearch. We can also specify any as the network interface, which will sniff the traffic on all interfaces, but that is often not what we want to achieve. It is also not currently supported on all operating systems; it doesn't work on OS X, for example.

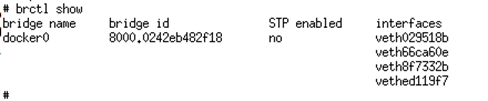

Nevertheless, we can specify the docker0 network interface, which allows us to sniff the network traffic from all container veth* network interfaces, which is exactly what we want to achieve. It's often the case that we want to sniff the network traffic of every running container in order to analyze the traffic going to services running in the containers.

When Docker starts, it creates a virtual interface named docker0 and assigns it an address and subnet chosen at random from the private ranges not already in use on the host, which is often 172.17.42.1/16 if available. Note that the netmask is 16 bits long, which means there are 65534 available IP addresses that can be assigned to running containers [1].
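The address arithmetic can be checked with Python's standard ipaddress module. This is a small sketch that assumes the bridge received 172.17.42.1/16; on a given host Docker may pick a different range.

```python
import ipaddress

# Assumed bridge address; Docker may choose another private range on your host.
bridge = ipaddress.ip_interface("172.17.42.1/16")
network = bridge.network

# A /16 contains 2**16 addresses; the network and broadcast addresses
# cannot be assigned to containers.
usable_hosts = network.num_addresses - 2

print(network)       # 172.17.0.0/16
print(usable_hosts)  # 65534

# The Nginx container address used later in this article is in the subnet.
print(ipaddress.ip_address("172.17.0.11") in network)  # True
```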

The docker0 interface is a network bridge to which the veth* network interfaces of running Docker containers are attached. Because of this, the containers can communicate with the host as well as with each other, so a service in one container can talk to a service running in a different container. Note that all interfaces attached to the same bridge are in the same broadcast domain, so when one interface broadcasts a request, it is sent over the bridge to every other network interface, any of which may respond with a packet.

When Docker configures another container, it creates a new virtual interface with a unique veth* name, which is bound to the docker0 network interface on the host. Inside the Docker container, the interface is renamed to eth0 and given a unique IP address from the bridge's range of available network addresses. The Packetbeat Docker container needs to be given the --net option, which accepts the following values:

- none: The docker container will have its own unconfigured network stack, which allows us to build our own network inside the container.

- container:name/id: The docker container will share the network stack with another container identified by NAME/ID, and the containers will be able to talk to each other through the loopback interface.

- bridge: This option is the default and connects the container interface to the Docker bridge as already described.

- host: The container will share the network with the Docker host and will have access to all its network interfaces. With this option alone, the container is not able to reconfigure the host's interfaces; that would require giving the container privileged mode with the "--privileged=true" option.

The docker0 interface on the host is used as the default gateway by which the containers are able to reach the Internet. Therefore, by configuring Packetbeat to listen on the docker0 network interface, we're successfully telling it to sniff every network packet coming from the Internet to any Docker container, which is exactly what we want to achieve.

Monitoring an HTTP Application Running in Docker

Now that we have Packetbeat up and running, we have to provide a service for Packetbeat to monitor by sniffing traffic from the docker0 bridge. In our case we'll provide a static website, which is a great example for testing Packetbeat's capabilities.

Let's first create the /home/core/website directory and copy some static website content into it. After that, we should run the nginx container, mounting the static website content into the Nginx html directory, which can be done with the -v option. Note that we've also published the container port, so we can access the website running in that container on HTTP port 80.

# docker run -p 80:80 -v /home/core/website:/usr/share/nginx/html:ro -d nginx

Afterward, we should connect to the Nginx container, where our website application is running. The Protean Security website is running in the container, as can be seen in the picture below.

To get details about the Packetbeat data in Elasticsearch, we can issue the following command, which pretty-prints the information Packetbeat has gathered so far:

# curl -XGET 'http://localhost:9200/packetbeat-*/_search?pretty'

As we navigate through the website, all the HTTP requests are gathered by Packetbeat and stored in Elasticsearch.
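The JSON returned by the _search endpoint can also be processed programmatically. The following Python sketch uses only the standard library; the function names are hypothetical, and the document field names it reads (type, method, path and http.code) are assumptions about how Packetbeat lays out HTTP transactions, which may differ between versions.

```python
import json
import urllib.request


def fetch_search(base_url="http://localhost:9200", index="packetbeat-*", size=10):
    """Fetch up to `size` documents from the same _search endpoint curl uses."""
    url = f"{base_url}/{index}/_search?size={size}"
    with urllib.request.urlopen(url, timeout=5) as resp:
        return json.load(resp)


def summarize_http(response):
    """Return (method, path, status code) tuples for HTTP transactions.

    The field names used here are assumptions and may need adjusting for
    the Packetbeat version in use.
    """
    rows = []
    for hit in response.get("hits", {}).get("hits", []):
        doc = hit.get("_source", {})
        if doc.get("type") != "http":
            continue  # skip DNS, MySQL and other transaction types
        code = doc.get("http", {}).get("code")
        rows.append((doc.get("method"), doc.get("path"), code))
    return rows
```

With Elasticsearch reachable on localhost, summarize_http(fetch_search()) would list the most recently indexed HTTP requests in a compact form.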

Viewing Analyzed Data through Kibana Graphs

The exposed RESTful API is great, but it fails to provide a quick and nice-looking way of presenting what is happening in our network. To solve that, we can use Kibana, where dashboards let us see the gathered data quickly and instantly tell what is happening on our network.

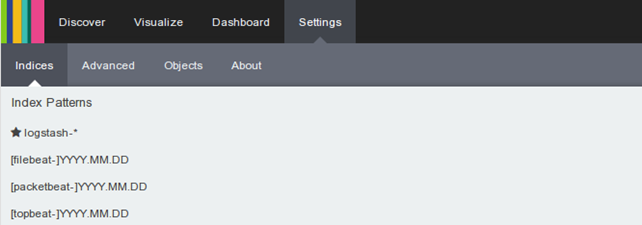

First, we must go to "Settings – Indices," where new indices will be shown as below.

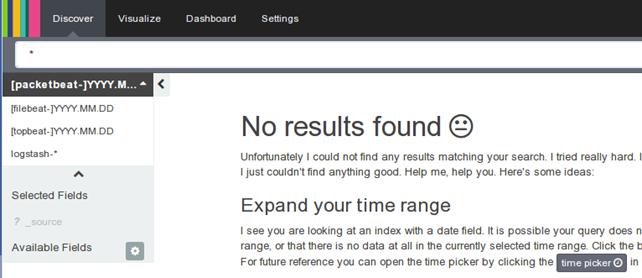

Back in the "Discover" tab, we can select the index pattern we would like to use from the drop-down menu. An index pattern identifies the Elasticsearch indices we would like to explore. To display all indices defined in Elasticsearch, we can run the following command:

# curl 'localhost:9200/_cat/indices?v'

Note that in the Discover tab, we can select the index pattern "packetbeat-*" in the drop-down list to view the gathered data.

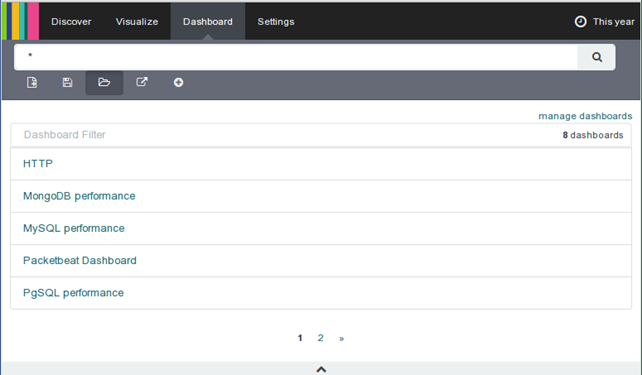

To install the dashboards from the beats-dashboards GitHub repository, we can simply clone the repository and run the "./load.sh http://localhost:9200" command to load the dashboards (this is already done by the Docker images presented in the previous section, so there's no need to do it again). Then, back in Kibana, we can go to "Dashboard – Load Saved Dashboard" to see the newly added dashboards, as shown below.

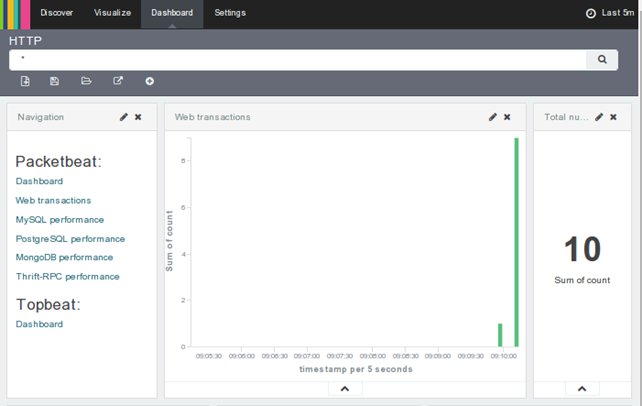

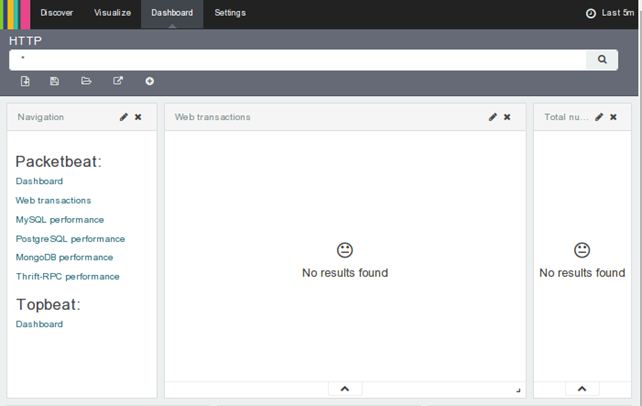

If we click on the HTTP dashboard, we can see that a new dashboard will open, which also contains the navigation page, where we can select different dashboards directly. At this time, there is no data present in the Kibana interface, as is shown below.

Now we have to test two use-case scenarios. First we have to visit the website from outside of the Docker infrastructure, which can be easily achieved by visiting the website from another machine altogether. After doing that, we can go to the Kibana interface to see whether Packetbeat has gathered any HTTP requests in the last couple of minutes—we can indeed see that there are 10 requests present in the interface. This verifies that Packetbeat is able to sniff the packets coming from the Internet to a Docker container.

We also have to verify that Packetbeat can monitor requests coming from another Docker container running on the same physical host. Instead of specifying the external IP address, we'll specify the internal IP address of the container. To find it, we run "docker exec -it ID /bin/bash" and execute the ifconfig command in the Docker container running Nginx. Then we use the same docker exec command to gain command-line access to some other container running on the same host and execute the "curl http://172.17.0.11/" command to download the website's HTML source code. Note that curl does not parse the returned HTML, so no additional requests for images or JavaScript/CSS files are made, which means only one request is generated.
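To illustrate why a browser generates more requests than curl for the same page, the sketch below parses an HTML document with Python's standard html.parser module and counts the sub-resources a browser would go on to fetch. It is a deliberately simplified model: the class and function names are hypothetical, the sample page is made up, and real browsers also fetch other resources (fonts, favicons and so on) and may serve some from cache.

```python
from html.parser import HTMLParser


class ResourceCollector(HTMLParser):
    """Collect URLs of sub-resources referenced by img, script and link tags."""

    def __init__(self):
        super().__init__()
        self.resources = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("img", "script") and attrs.get("src"):
            self.resources.append(attrs["src"])
        elif tag == "link" and attrs.get("rel") == "stylesheet" and attrs.get("href"):
            self.resources.append(attrs["href"])


def count_requests(html):
    """Return 1 (the page itself) plus the number of referenced sub-resources."""
    collector = ResourceCollector()
    collector.feed(html)
    return 1 + len(collector.resources)


# Made-up sample page with one stylesheet, one script and one image.
page = """<html><head>
<link rel="stylesheet" href="style.css">
<script src="app.js"></script>
</head><body><img src="logo.png"></body></html>"""

print(count_requests(page))  # 4
```

curl stops after the first of these requests, which is why Packetbeat records a single transaction for it.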

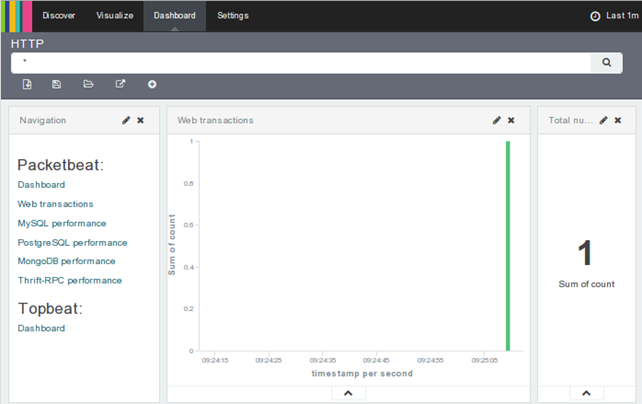

If we set the time filter in the Kibana web interface to a recent window in which no requests were generated (the last 1 minute is good enough), the request counter goes back to zero. Then we can issue the curl command, which generates 1 request, as shown below.

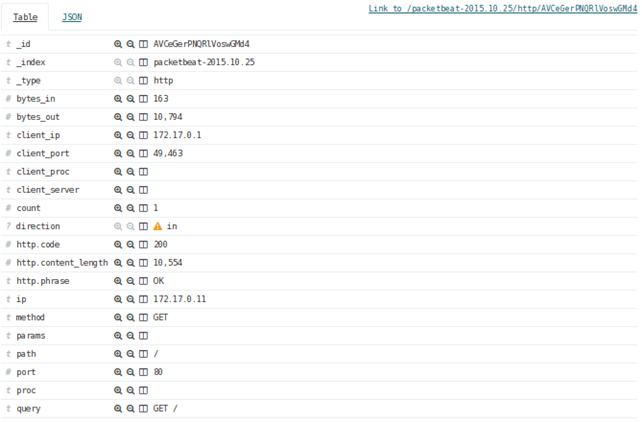
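The same "last minute" check can be made against Elasticsearch directly, without Kibana. The sketch below builds a request for the _count endpoint with a range query on the @timestamp field. The function names are hypothetical, and the query counts all Packetbeat documents in the window, not only HTTP transactions; narrowing it to HTTP would need an extra filter whose exact syntax depends on the Elasticsearch version.

```python
import json
import urllib.request


def build_count_request(base_url="http://localhost:9200", window="1m"):
    """Build a request counting Packetbeat documents newer than now-<window>."""
    body = {"query": {"range": {"@timestamp": {"gte": f"now-{window}"}}}}
    return urllib.request.Request(
        f"{base_url}/packetbeat-*/_count",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def count_recent(base_url="http://localhost:9200", window="1m"):
    """Send the request and return the 'count' field of the response."""
    request = build_count_request(base_url, window)
    with urllib.request.urlopen(request, timeout=5) as resp:
        return json.load(resp)["count"]
```

Calling count_recent() before and after the curl command should show the count increasing, provided no other monitored traffic arrives in the meantime.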

If we view the details of a request in the "Discover" tab, the following information is available (note that only the most useful fields are shown in the picture below). We can see that a "GET /" request was sent from IP address 172.17.0.1 to IP address 172.17.0.11 (where our website is served by the nginx Docker container). The response returned HTTP status code 200 and contained 10554 bytes of data.

Conclusion

In this article we've seen that we can gather a lot of useful information about packets flowing to and from our Docker containers, which can help us discover anomalies that can be further analyzed for potential threats.

This exercise showed that we can use Packetbeat for defense, because it is able to sniff traffic from Docker containers. Many cloud-based applications run in Docker containers, so sniffing the packets to analyze them, or possibly piping them to an IDS/IPS, helps us discover malicious traffic and block the attacker as soon as possible.

References

[1] Network configuration, https://docs.docker.com/articles/networking/